
Data tokenization and masking protect personal information and enable compliance. Learn where and when to employ each – and how business entities can help.

Why data tokenization vs masking?

The requirement to secure sensitive data is mainly dictated by company policy and by regional legislation and industry standards, including GDPR, CCPA, PCI DSS, and HIPAA (which governs PHI).

As a rule, organizations protect sensitive data and ensure data privacy compliance in two main ways: data tokenization and data masking (aka data anonymization).

This article reviews data tokenization tools and data masking tools, explains their similarities and differences and their advantages and disadvantages, and shows how a business entity approach optimizes their performance.

Comparing data tokenization vs masking

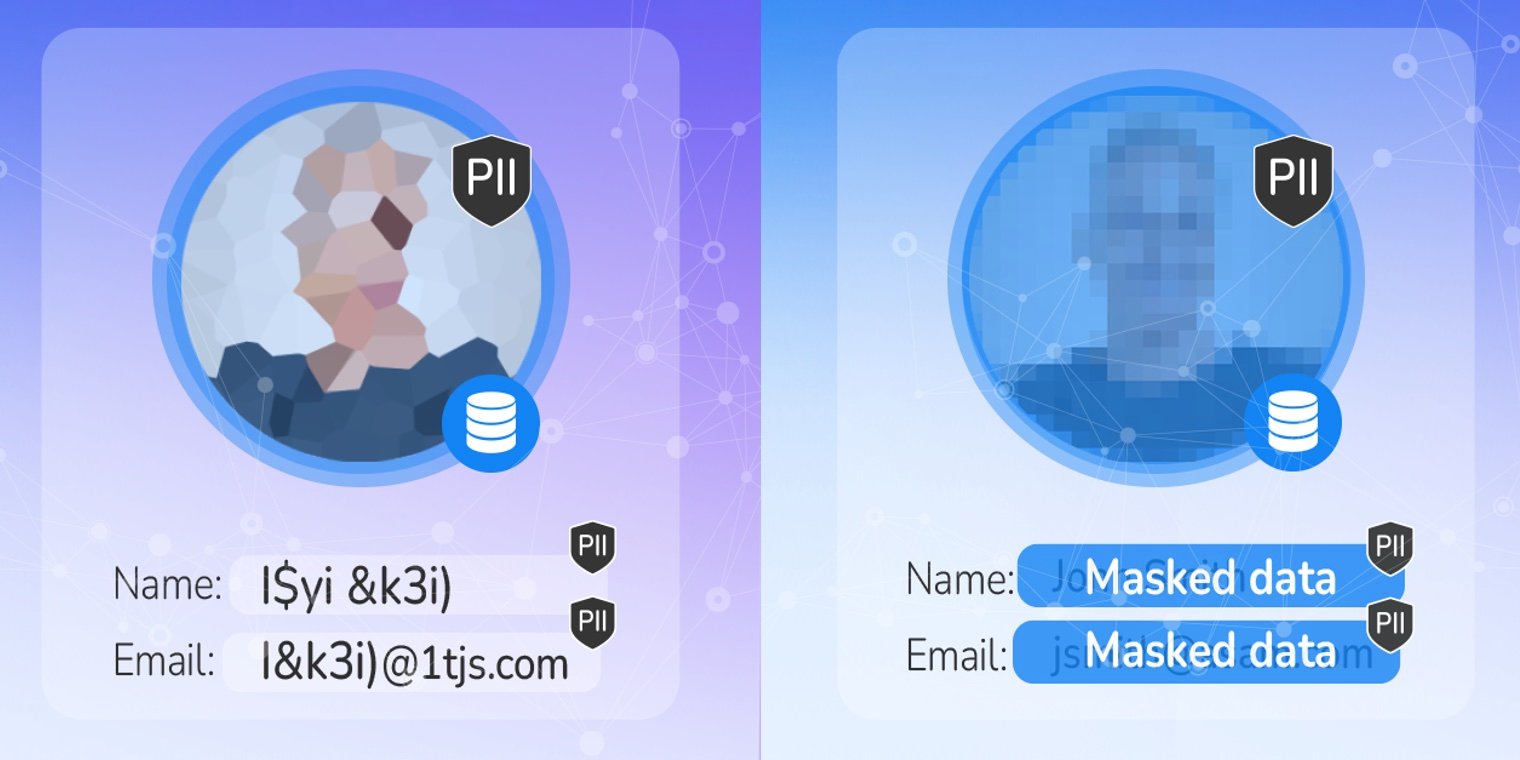

Data tokenization and data masking are both effective means of securing sensitive data, such as PII (Personally Identifiable Information), and of complying with data privacy laws. But you should understand their similarities, as well as their differences.

Data tokenization defined

Data tokenization obscures the meaning of information by replacing its values with meaningless symbols, or tokens, which are then used in internal systems and databases. Data tokenization best practices call for replacing sensitive data in databases, data stores, internal systems, and applications with randomized elements, such as a string of characters and/or digits, that are worthless to an attacker in the event of a data breach.

Effective data tokenization software secures data both in motion and at rest. If an authorized data consumer or application requires the actual data, the token can be de-tokenized to its original value.
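To illustrate the vault model, here is a minimal Python sketch. The `TokenVault` class, the `tok_` token prefix, and the boolean `authorized` flag are hypothetical simplifications; a real vault would also encrypt and persist its contents and enforce proper access control.

```python
import secrets


class TokenVault:
    """Hypothetical in-memory token vault (illustration only, no encryption)."""

    def __init__(self) -> None:
        self._token_to_value: dict[str, str] = {}
        self._value_to_token: dict[str, str] = {}

    def tokenize(self, value: str) -> str:
        # Reuse an existing token so the same value always gets the same token,
        # which keeps references between systems consistent.
        if value in self._value_to_token:
            return self._value_to_token[value]
        # The token is random, so it reveals nothing about the original value.
        token = "tok_" + secrets.token_hex(8)
        while token in self._token_to_value:  # guard against a (very unlikely) collision
            token = "tok_" + secrets.token_hex(8)
        self._token_to_value[token] = value
        self._value_to_token[value] = token
        return token

    def detokenize(self, token: str, authorized: bool) -> str:
        # Only authorized consumers may recover the original value.
        if not authorized:
            raise PermissionError("caller is not authorized to detokenize")
        return self._token_to_value[token]


vault = TokenVault()
t = vault.tokenize("4111 1111 1111 1111")
print(vault.tokenize("4111 1111 1111 1111") == t)  # True
print(vault.detokenize(t, authorized=True))        # 4111 1111 1111 1111
```

Because every original value lives in one place, this design also shows why the vault itself becomes the most valuable target.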

Data masking defined

There are various types of data masking, but all involve replacing actual data with fictitious, yet structurally equivalent, data that can still be used to conduct business processes. The masked version of the data cannot be reverse-engineered or re-identified: sensitive values are typically replaced with scrambled values, leaving no way to retrieve the original.

Note that while the masked data remains valuable to authorized personnel and software, it’s meaningless to unauthorized users. Anonymized data preserves relational integrity and usability across different analytics platforms and databases, whether it is masked dynamically or statically.
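One common way to make masking both irreversible and consistent, so that relational integrity survives, is deterministic masking with a keyed one-way hash. The sketch below assumes HMAC-SHA-256 from Python's standard library; the key, the name list, and the `mask_*` helpers are hypothetical.

```python
import hashlib
import hmac

# Hypothetical secret key; it must be kept secret and never shipped with the masked data.
MASKING_KEY = b"replace-with-a-secret-key"

FIRST_NAMES = ["Alex", "Jordan", "Taylor", "Morgan", "Casey", "Riley"]


def _digest(value: str) -> bytes:
    # Keyed one-way hash: without the key, the original cannot be recovered
    # or guessed by hashing a dictionary of likely values.
    return hmac.new(MASKING_KEY, value.encode("utf-8"), hashlib.sha256).digest()


def mask_name(name: str) -> str:
    # Same input -> same fictitious name on every run.
    return FIRST_NAMES[int.from_bytes(_digest(name)[:4], "big") % len(FIRST_NAMES)]


def mask_customer_id(customer_id: str) -> str:
    # Deterministic, so a masked primary key still matches masked foreign keys.
    return "C" + _digest(customer_id).hex()[:10]


customers = [{"id": "1001", "name": "Dana"}]
orders = [{"order_id": "A-1", "customer_id": "1001"}]

masked_customers = [
    {"id": mask_customer_id(c["id"]), "name": mask_name(c["name"])} for c in customers
]
masked_orders = [{**o, "customer_id": mask_customer_id(o["customer_id"])} for o in orders]

# The customer/order relationship still holds after masking.
print(masked_orders[0]["customer_id"] == masked_customers[0]["id"])  # True
```

Note that `mask_name` maps many names onto a small pool, so it is consistent but not unique; identifiers that must stay unique need extra handling.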

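Here’s a side-by-side comparison:

| | Data tokenization | Data masking |
|---|---|---|
| What does it do? | Replaces sensitive values with meaningless tokens; the originals are kept in a secure token vault | Replaces sensitive values with fictitious but structurally equivalent data |
| What capabilities does it have? | Secures data in motion and at rest | Static and dynamic masking |
| Does it preserve relational integrity? | Not always; many solutions struggle to keep tokens consistent across systems | Yes, provided masked values are applied consistently across systems |
| Can it be undone? | Yes; authorized users and applications can de-tokenize | No; masked data cannot be reverse-engineered |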

Advantages and disadvantages of each

Given these differences, each method comes with its own advantages and disadvantages:

Data tokenization

Advantages

- Business continuity: Tokens are format-preserving, which helps ensure business continuity.

- Reduced effort to comply with privacy laws: Because data tokenization reduces the number of systems that manage sensitive data, it reduces the effort required for privacy compliance.

- Lower security risk: Every instance of sensitive data is replaced with a token that has no meaningful value, so a breach of systems that hold only tokens does not reveal PII.

- Reduced cost of encryption: Only the data within the tokenization system needs to be encrypted, so it’s no longer necessary to encrypt all the other databases.
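Format preservation is what lets downstream systems accept tokens unchanged. The sketch below is a hypothetical illustration, not any vendor's algorithm: each digit is replaced with a random digit, separators stay in place, and the last four digits are kept visible (an assumption borrowed from common card-display practice). In a vault-based system the token-to-original mapping would still be stored in the vault, and a real system would also check that a token doesn't collide with an existing token or a real card number; both steps are omitted here.

```python
import secrets
import string


def format_preserving_token(value: str, keep_last: int = 4) -> str:
    """Hypothetical sketch: a random token with the same layout as `value`."""
    total_digits = sum(ch in string.digits for ch in value)
    seen = 0
    out = []
    for ch in value:
        if ch in string.digits:
            seen += 1
            if seen > total_digits - keep_last:
                out.append(ch)  # keep the trailing digits visible (assumption)
            else:
                out.append(secrets.choice(string.digits))  # random replacement digit
        else:
            out.append(ch)  # separators such as "-" or " " stay in place
    return "".join(out)


card = "4111-1111-1111-1234"
token = format_preserving_token(card)
print(len(token) == len(card))  # True
print(token.endswith("1234"))   # True
```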

Disadvantages

Data tokenization relies on a centralized token vault (where the original data is stored), resulting in:

- Compromised relational integrity and formatting: Many current data tokenization solutions struggle to maintain the referential and formatting integrity of tokens across systems.

- Possible bottlenecks when scaling up: In some system architectures, a centralized token vault can inhibit scalability, so the trade-off between availability and performance must be considered.

- Increased risk of a massive breach: If an attacker gets into the encrypted vault, all sensitive data is at risk. For this reason, tokenization servers are typically kept separately, in undisclosed, secure locations.

Data masking

Advantages

- Data sanitization: Masking replaces the original sensitive data with a masked version, rather than deleting files (which can leave traces of the data on the storage media).

- Preserved data functionality: Masking maintains the functional properties of the data, while making that same data useless to an attacker.

- Reduced security risk: Data masking addresses several security scenarios, including data exfiltration, data loss, insider threats, insecure third-party integrations, and risks related to cloud adoption.

Disadvantages

- Difficulty handling unique data: If the original sensitive data is unique (such as a bank account number, telephone number, or Social Security Number), the masking system must recognize this and generate unique masked values that are used consistently across all systems.

- Difficulty maintaining semantic and gender context: The data masking system must understand the semantics, or meaning, of the data. It must also preserve gender when replacing names; otherwise, analyses such as market segmentation may be skewed.

- Difficulty assuring relational integrity and preserving formats: To produce a meaningful masked version of production data, the masking process must maintain the context of the information. If it can’t, it may fail to assure relational integrity or to preserve formatting.
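The first two challenges can be sketched in a few lines. This is a hypothetical illustration, not a production masking engine: names are replaced from a gender-specific pool chosen by a keyed hash, and unique identifiers are mapped to unique, consistent 9-digit values by re-hashing on collision. The key, the name pools, and `UniqueMasker` are assumptions. The lookup table that guarantees uniqueness is sensitive: it should exist only for the duration of a masking run and be discarded or protected afterwards, because it would otherwise make the masking reversible.

```python
import hashlib
import hmac

MASKING_KEY = b"replace-with-a-secret-key"  # hypothetical key

# Hypothetical gender-specific name pools; a real system would use far larger ones.
NAMES = {
    "F": ["Anna", "Maria", "Sofia", "Emma"],
    "M": ["David", "James", "Lucas", "Noah"],
}


def _h(value: str) -> int:
    digest = hmac.new(MASKING_KEY, value.encode(), hashlib.sha256).digest()
    return int.from_bytes(digest[:8], "big")


def mask_first_name(name: str, gender: str) -> str:
    # Replace from the same gender's pool so gender-based analyses stay valid.
    pool = NAMES[gender]
    return pool[_h(name) % len(pool)]


class UniqueMasker:
    """Maps unique identifiers (e.g. SSNs) to unique, consistent masked values."""

    def __init__(self, digits: int = 9) -> None:
        self.digits = digits
        self._assigned: dict[str, str] = {}  # original -> masked (sensitive!)
        self._used: set[str] = set()

    def mask(self, value: str) -> str:
        if value in self._assigned:  # consistent: same input, same output
            return self._assigned[value]
        attempt = 0
        while True:  # assumes fewer inputs than 10**digits possible outputs
            candidate = str(_h(f"{value}:{attempt}") % 10**self.digits).zfill(self.digits)
            if candidate not in self._used:  # unique: never reuse a masked value
                break
            attempt += 1
        self._assigned[value] = candidate
        self._used.add(candidate)
        return candidate


m = UniqueMasker()
a = m.mask("123-45-6789")
b = m.mask("987-65-4321")
print(a != b)                                        # True
print(m.mask("123-45-6789") == a)                    # True
print(mask_first_name("Emily", "F") in NAMES["F"])   # True
```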

Which is right for you?

When comparing data tokenization vs masking, note that neither approach is inherently better than the other. Both protect sensitive data, such as PII, and help you comply with privacy laws.

Your use cases will determine which method is best. Begin by answering the following questions:

- Where and when is your sensitive data most at risk?
- Which privacy regulations apply?
- What are the greatest vulnerabilities to the data?

Your answers to these questions will indicate whether data tokenization or data masking better suits your specific needs and architecture.

Best of both worlds with business entities

Entity-based data masking technology encompasses both data tokenization and data masking. It creates a data pipeline that delivers all the data of a specific business entity, such as a customer, vendor, or order, to authorized data consumers. The data for each instance of a business entity is persisted and managed in its own individually encrypted Micro-Database™.

Entity-based data masking masks both structured and unstructured data in flight, while preserving relational integrity. Images, PDFs, text files, and other formats that contain sensitive data are secured with static and dynamic data masking.
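To make the idea concrete, here is a minimal, hypothetical sketch in Python. It is not K2view's implementation or API: the Micro-Database™ is only approximated by one in-memory SQLite database per customer, encryption is omitted, and the source rows and key are invented. All rows belonging to one customer are grouped, masked in flight with a keyed hash, and written to that customer's own database, so joins within the entity still work.

```python
import hashlib
import hmac
import sqlite3
from collections import defaultdict

MASKING_KEY = b"replace-with-a-secret-key"  # hypothetical key


def mask(value: str) -> str:
    # Deterministic keyed hash: the same value masks the same way in every table.
    return hmac.new(MASKING_KEY, value.encode(), hashlib.sha256).hexdigest()[:12]


# Hypothetical rows from two source systems, both keyed by customer email.
crm_rows = [("ann@example.com", "Ann Lee"), ("bo@example.com", "Bo Chan")]
billing_rows = [
    ("ann@example.com", "INV-1", 120.0),
    ("ann@example.com", "INV-2", 80.0),
    ("bo@example.com", "INV-3", 45.5),
]

# Group every row by its business entity (here, the customer).
by_customer = defaultdict(lambda: {"crm": [], "billing": []})
for email, name in crm_rows:
    by_customer[email]["crm"].append((email, name))
for email, invoice, amount in billing_rows:
    by_customer[email]["billing"].append((email, invoice, amount))

# One small database per customer; values are masked before they are stored.
# (Per-entity encryption is left out of this sketch.)
micro_dbs: dict[str, sqlite3.Connection] = {}
for email, data in by_customer.items():
    db = sqlite3.connect(":memory:")
    db.execute("CREATE TABLE customer (email TEXT PRIMARY KEY, name TEXT)")
    db.execute("CREATE TABLE invoice (email TEXT REFERENCES customer(email), id TEXT, amount REAL)")
    db.executemany("INSERT INTO customer VALUES (?, ?)",
                   [(mask(e), mask(n)) for e, n in data["crm"]])
    db.executemany("INSERT INTO invoice VALUES (?, ?, ?)",
                   [(mask(e), i, a) for e, i, a in data["billing"]])
    micro_dbs[mask(email)] = db

# Relational integrity survives masking: invoices still join to their customer.
ann = micro_dbs[mask("ann@example.com")]
print(ann.execute("SELECT COUNT(*) FROM invoice JOIN customer USING (email)").fetchone()[0])  # 2
```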

As for data tokenization, the business entity approach effectively eliminates the risk of a massive breach of a centralized token vault, as well as scaling bottlenecks and any compromise in formatting and referential consistency.

In conclusion, the business entity approach automatically discovers, tokenizes, and masks data, and persists it in individually encrypted Micro-Databases. This simplifies data governance, compliance, and security, for a more complete and cost-effective data integration solution. And a single approach is preferable to multiple disparate solutions from separate data tokenization and data masking vendors.

Check out K2view data masking tools, the only ones with both masking and tokenization inside.