Accelerate your path to data value delivery

always up to date

Auto-discover, classify, and activate your metadata

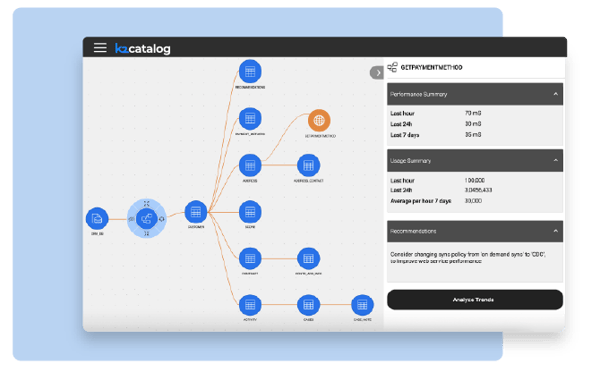

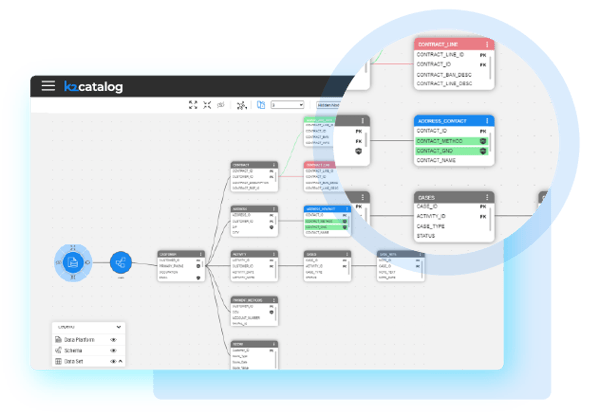

K2view Data Catalog keeps an always-current inventory of the organization's data assets and visualizes the relationships between them, enabling data teams to easily find and understand data.

- Data source crawlers scan the underlying sources.
- AI-automated data discovery registers databases, tables, and fields in the catalog.
- AI-automated data classification is applied to each data element.
- Intuitive, elegant visualization of your data assets and the relationships between them.
- Interoperability with 3rd-party enterprise data catalog tools.
- Quick and easy implementation, because K2view leverages AI.
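To make "AI-automated data classification" more concrete, here is a minimal sketch of one common building block: classifying a column from sample values and attaching a confidence score. It is not K2view's implementation. The class labels, regular expressions, and the 0.8 threshold are hypothetical, and a production classifier would also weigh field names, metadata, and learned models.

```python
import re

# Hypothetical rule set: class label -> regex that a whole value must match.
# Dict order matters on ties: earlier, more specific patterns win
# (a date like "2024-01-05" would also satisfy the loose PHONE pattern).
PATTERNS = {
    "EMAIL": re.compile(r"[^@\s]+@[^@\s]+\.[A-Za-z]{2,}"),
    "DATE": re.compile(r"\d{4}-\d{2}-\d{2}"),
    "PHONE": re.compile(r"\+?\d[\d\-\s]{7,}\d"),
}


def classify_column(values, threshold=0.8):
    """Return (label, confidence) for the best-matching class.

    Confidence is the share of non-empty sample values that match the
    class pattern. Returns (None, score) when no class reaches the threshold.
    """
    non_empty = [v.strip() for v in values if v and v.strip()]
    if not non_empty:
        return None, 0.0
    best_label, best_score = None, 0.0
    for label, pattern in PATTERNS.items():
        hits = sum(1 for v in non_empty if pattern.fullmatch(v))
        score = hits / len(non_empty)
        if score > best_score:  # strict '>' keeps the earlier label on ties
            best_label, best_score = label, score
    if best_score >= threshold:
        return best_label, best_score
    return None, best_score
```

For example, `classify_column(["alice@example.com", "bob@example.org"])` returns `("EMAIL", 1.0)`, while a column where only half the values look like emails falls below the threshold and stays unclassified.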

IMPLEMENT WITH AGILITY

Quick and easy setup

K2view Data Catalog is quick to set up. Its AI helps discover, classify, visualize, and activate metadata, giving data teams an always-current view of enterprise data assets and the relationships between them.

-

Connect to any source: Use extensible crawlers and plugins to scan relational and non-relational systems across the data landscape.

-

Map relationships automatically: Detect links between tables and entities using SQL analysis, field semantics, data patterns, and key analysis.

-

Enrich metadata: Generate descriptions, tags, classifications, and confidence scores that improve search, governance, and understanding.

-

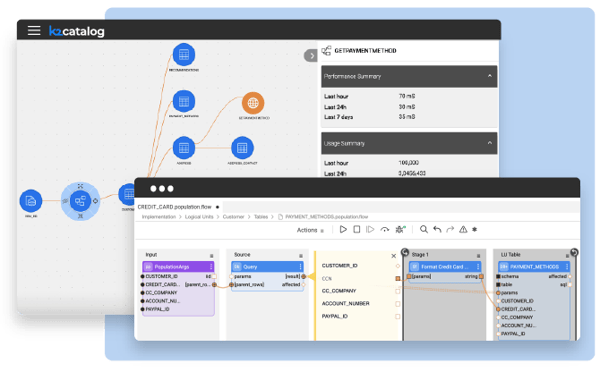

Create data products: Use catalog intelligence to generate entity models, ingestion flows, masking rules, quality functions, and APIs.
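Of the relationship-mapping signals listed above, key analysis is the easiest to illustrate. The sketch below assumes each table is available as in-memory column samples (a hypothetical layout) and flags a column as a likely foreign key when all of its values appear in a unique column of another table. The function names are illustrative, not K2view's API. Value overlap alone produces false positives (small integer IDs overlap easily), which is why it is typically combined with SQL analysis and field semantics.

```python
from itertools import product


def candidate_keys(table):
    """Columns whose non-null sample values are all distinct (possible primary keys)."""
    keys = []
    for col, values in table.items():
        non_null = [v for v in values if v is not None]
        if non_null and len(set(non_null)) == len(non_null):
            keys.append(col)
    return keys


def find_relationships(tables, min_coverage=1.0):
    """Return (child_table, child_col, parent_table, parent_col) tuples.

    A link is proposed when at least `min_coverage` of the child column's
    distinct values are contained in a candidate key of the parent table
    (an inclusion-dependency check).
    """
    links = []
    for (child, child_cols), (parent, parent_cols) in product(tables.items(), repeat=2):
        if child == parent:
            continue
        for pk in candidate_keys(parent_cols):
            pk_values = {v for v in parent_cols[pk] if v is not None}
            for col, values in child_cols.items():
                vals = {v for v in values if v is not None}
                if not vals:
                    continue
                coverage = len(vals & pk_values) / len(vals)
                if coverage >= min_coverage:
                    links.append((child, col, parent, pk))
    return links
```

With `customers` holding `customer_id` values 1 to 3 and `orders.customer_id` holding `[1, 1, 3]`, the only proposed link is `orders.customer_id` to `customers.customer_id`.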

accelerate data product creation

Data catalog-driven automation

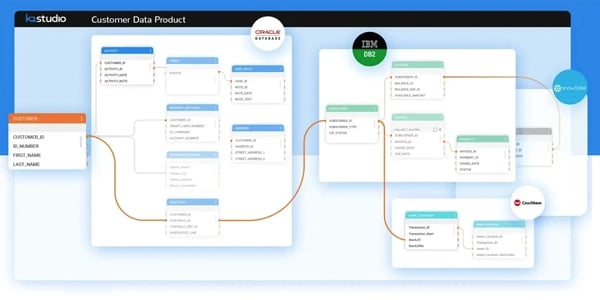

K2view Data Catalog uses AI to accelerate the implementation of data products by automating many of the data product design and engineering activities, including:

- Auto-generating your data product schema.
- Auto-generating data product ingestion flows based on the data product schema.
- Auto-applying data masking functions to protect PII.
- Applying relevant data quality functions based on the data classifications.
- Auto-generating web service APIs to expose data ingested by a data product to authorized data consumers.
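Classification-driven masking can be sketched as a lookup from data class to masking function. In this illustrative example, the class labels and masking rules are assumptions rather than K2view functions: emails keep their domain but get a hashed local part, and phone numbers keep only their last four digits. Hashing like this is pseudonymization, not full anonymization.

```python
import hashlib


def mask_email(value):
    """Keep the domain; replace the local part with a stable 8-char hash prefix."""
    local, _, domain = value.partition("@")
    digest = hashlib.sha256(local.encode("utf-8")).hexdigest()[:8]
    return f"{digest}@{domain}"


def mask_phone(value):
    """Replace all but the last four digits with 'X' (formatting is dropped)."""
    digits = "".join(c for c in value if c.isdigit())
    if len(digits) <= 4:
        return "X" * len(digits)
    return "X" * (len(digits) - 4) + digits[-4:]


# Hypothetical mapping from classification label to masking function.
MASKING_RULES = {"EMAIL": mask_email, "PHONE": mask_phone}


def mask_record(record, classifications):
    """Mask each field whose class has a registered rule; copy the rest unchanged."""
    masked = {}
    for field, value in record.items():
        rule = MASKING_RULES.get(classifications.get(field))
        masked[field] = rule(value) if rule and value is not None else value
    return masked
```

Because the hash is deterministic, the same email always masks to the same value, which preserves joins across masked tables.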

K2view Data Catalog serves as the backbone data registry for creating data products in a federated data mesh or centralized data fabric architecture.

embrace change with speed

Automated change management

K2view Data Catalog identifies schema drift in your data sources and alerts you to it. It then automatically propagates those changes to your data product implementation, enabling seamless change management.

- Full version management of the data catalog, with schema drift visualization that highlights changes between versions.
- Accept or reject auto-discovered changes to the catalog.
- Roll back to prior versions.
- Update the data product schema, ingestion flows, data governance functions, and web services in minutes.
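Schema drift detection and catalog versioning come down to comparing snapshots. The following minimal sketch assumes each snapshot is a plain `{table: {column: type}}` dictionary (a hypothetical format). It lists added, removed, and retyped tables and columns, and keeps a simple version history that supports accepting a reviewed snapshot and rolling back. The names do not reflect K2view's API.

```python
import copy


def diff_schemas(old, new):
    """Compare two {table: {column: type}} snapshots.

    Returns (kind, table, column, detail) tuples in a deterministic order.
    """
    changes = []
    for table in sorted(old.keys() - new.keys()):
        changes.append(("table_removed", table, None, None))
    for table in sorted(new.keys() - old.keys()):
        changes.append(("table_added", table, None, None))
    for table in sorted(old.keys() & new.keys()):
        old_cols, new_cols = old[table], new[table]
        for col in sorted(old_cols.keys() - new_cols.keys()):
            changes.append(("column_removed", table, col, None))
        for col in sorted(new_cols.keys() - old_cols.keys()):
            changes.append(("column_added", table, col, None))
        for col in sorted(old_cols.keys() & new_cols.keys()):
            if old_cols[col] != new_cols[col]:
                detail = f"{old_cols[col]} -> {new_cols[col]}"
                changes.append(("type_changed", table, col, detail))
    return changes


class CatalogHistory:
    """Hypothetical version log: accept new snapshots, roll back to earlier ones."""

    def __init__(self, initial):
        self.versions = [copy.deepcopy(initial)]

    @property
    def current(self):
        return self.versions[-1]

    def accept(self, snapshot):
        """Record a reviewed snapshot as the new current version."""
        self.versions.append(copy.deepcopy(snapshot))

    def rollback(self):
        """Drop the current version unless it is the only one; return the new current."""
        if len(self.versions) > 1:
            self.versions.pop()
        return self.current
```

A reviewer would inspect `diff_schemas(history.current, crawled)` and call `accept` only if the drift is expected, mirroring the accept-or-reject step described above.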

Key features and capabilities

K2view Data Catalog Tools

Auto-discovery

Auto-discover your data assets, regardless of data source

Auto-classification

Automatically classify your data assets to enable quick search and understanding

Catalog versioning

Keep track of your changes with version management and rollback capabilities

Passive and active metadata

Visualize both passive (design time) metadata and active (runtime) metadata

Data product model automation

Generate the data product schema directly from your data catalog

Automated data ingestion

Data ingestion flows are auto-generated based on the data product model

Graph database

Enable enterprise scale and flexibility with a modern graph database architecture

Interoperability

Share K2view Data Catalog metadata with 3rd-party enterprise data catalogs