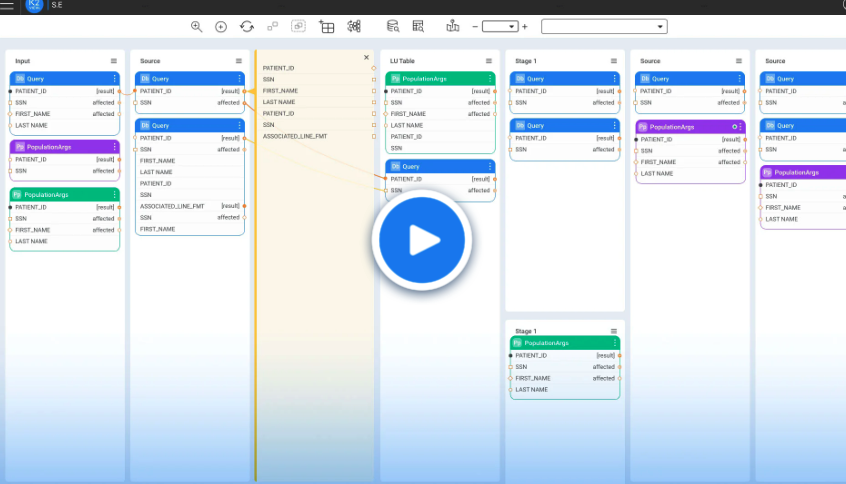

The enterprise needs a single data preparation solution for offline and real-time operational use cases. The biggest obstacle to meeting this need is the lack of simple-to-use, self-service tools that can quickly and consistently prepare quality data. That’s because most big data is prepared database by database, table by table, and row by row, then linked to other tables through joins, indexes, and scripts. For example, all invoices, all payments, all customer orders, all service tickets, all marketing campaigns, and so on, must be collected and processed separately. Complex data matching, validation, and cleansing logic is then required to ensure that the data is complete and clean, with data integrity maintained at all times.

A better way is to collect, cleanse, transform, and mask data holistically, as a business entity. For example, a customer business entity would include a single customer’s master data, together with their invoices, payments, service tickets, and campaigns.
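To make the idea concrete, here is a minimal Python sketch of grouping rows from separate tables into one record per customer. It is an illustration only, not K2View's implementation or API: the table contents, the `customer_id` key, and the `build_customer_entities` helper are all hypothetical.

```python
# Hypothetical flat source tables; every row carries a customer_id.
customers = [
    {"customer_id": 1, "name": "Ada"},
    {"customer_id": 2, "name": "Grace"},
]
invoices = [
    {"invoice_id": "I-1", "customer_id": 1, "amount": 120.0},
    {"invoice_id": "I-2", "customer_id": 2, "amount": 80.0},
    {"invoice_id": "I-3", "customer_id": 1, "amount": 45.5},
]
payments = [
    {"payment_id": "P-1", "customer_id": 1, "amount": 120.0},
]


def build_customer_entities(customers, **related_tables):
    """Group rows from each related table under the customer they belong to."""
    # Start every entity with its master data and an empty list per table.
    entities = {
        c["customer_id"]: {"master": c, **{name: [] for name in related_tables}}
        for c in customers
    }
    for name, rows in related_tables.items():
        for row in rows:
            entity = entities.get(row["customer_id"])
            if entity is not None:  # skip rows with no matching customer
                entity[name].append(row)
    return entities


entities = build_customer_entities(customers, invoices=invoices, payments=payments)
```

Each value in `entities` now holds everything known about one customer, so later steps such as cleansing or masking can run once per entity rather than once per table.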

K2View Data Fabric supports data preparation by business entities.

It allows you to define a Digital Entity schema to accommodate all the attributes you want to capture for a given business entity (like a customer or an order), regardless of the source systems in which these attributes are stored. It automatically discovers where the desired data lives across your enterprise systems, and it creates data connections to those source systems. K2View Data Fabric collects data from source systems and stores it as individual digital business entities, each in its own encrypted micro-database, ready to be delivered to a consuming application, big data store, or user.

The solution keeps the data synchronized with the sources on a schedule you define. It automatically applies filters, transformations, enrichments, masking, and other steps crucial to quality data preparation. So, your data is always complete, up-to-date, and consistently and accurately prepared, ready for analytics or any operational use case you can conceive.
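As a rough illustration of what per-entity preparation steps can look like, the Python sketch below filters, enriches, and masks a single customer record. It is a simplified assumption, not the product's actual pipeline: the record layout, the `mask_email` rule, and the `prepare_entity` function are hypothetical names chosen for this example.

```python
def mask_email(email):
    """Hide the local part of an email address, keeping its first character."""
    local, _, domain = email.partition("@")
    return f"{local[:1]}***@{domain}"


def prepare_entity(entity):
    """Apply filter, enrich, and mask steps to one customer entity."""
    prepared = dict(entity)  # shallow copy so the input is left untouched
    # Filter: drop invoices with a non-positive amount.
    prepared["invoices"] = [i for i in entity["invoices"] if i["amount"] > 0]
    # Enrich: derive a total from the invoices that remain.
    prepared["invoice_total"] = sum(i["amount"] for i in prepared["invoices"])
    # Mask: hide the email before the entity is delivered to a consumer.
    master = entity["master"]
    prepared["master"] = {**master, "email": mask_email(master["email"])}
    return prepared


# Hypothetical raw entity, as it might look after collection from the sources.
raw = {
    "master": {"customer_id": 1, "name": "Ada", "email": "ada@example.com"},
    "invoices": [
        {"invoice_id": "I-1", "amount": 120.0},
        {"invoice_id": "I-2", "amount": -5.0},
    ],
}
prepared = prepare_entity(raw)
```

Because every step operates on a whole entity, the same rules apply consistently to all of a customer's data, which is the consistency the text describes.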

Looking at your data holistically at the business entity level ensures data integrity by design, giving your data teams quick, easy, and consistent access to the data they need. You always get insights and results you can trust because you have data you can trust.

Why you should prepare data by business entity