Enterprise large language models (LLMs) that use retrieval-augmented generation (RAG) produce generative AI responses that are more accurate and better grounded in context.

What is an enterprise LLM?

An enterprise LLM is a large language model employed by enterprises. Not only is it trained externally on vast amounts of textual information (typically billions or trillions of words), but it's also grounded internally with the trusted private data of your company or organization. By studying all this information and data, the model learns the intricate patterns and complex relationships that exist between words and ideas, enabling it to communicate more effectively with business entities like customers, employees, or vendors.

RAG in a nutshell

Retrieval-augmented generation (RAG) is a generative AI framework that augments LLMs with fresh, trusted data retrieved from authoritative internal knowledge bases and enterprise systems. The end result is more informed and reliable LLM responses. The grounding in internal data that RAG provides is a key factor in differentiating private enterprise LLMs from public consumer models.
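To make the idea concrete, here is a minimal sketch of the retrieve-then-augment flow in Python. It is an illustration under stated assumptions, not a reference implementation: the `KNOWLEDGE_BASE` contents, the function names, and the simple word-overlap ranking are all hypothetical stand-ins for an enterprise knowledge base and a real retrieval model.

```python
import re
from typing import List, Set

# Hypothetical in-memory knowledge base; a real deployment would query
# internal documents and enterprise systems instead.
KNOWLEDGE_BASE = [
    "Refunds are issued within 14 days of a return request.",
    "Premium support is available on weekdays from 8am to 6pm.",
    "Orders over 50 dollars ship free of charge.",
]

def tokenize(text: str) -> Set[str]:
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(query: str, docs: List[str], top_k: int = 1) -> List[str]:
    """Rank documents by the number of words they share with the query."""
    query_words = tokenize(query)
    ranked = sorted(docs, key=lambda d: len(query_words & tokenize(d)), reverse=True)
    return ranked[:top_k]

def augment(query: str, context: List[str]) -> str:
    """Combine retrieved context and the user's query into one prompt."""
    joined = "\n".join(f"- {c}" for c in context)
    return f"Answer using only this context:\n{joined}\n\nQuestion: {query}"

query = "How long do refunds take?"
context = retrieve(query, KNOWLEDGE_BASE)
prompt = augment(query, context)  # this string would be sent to the LLM
print(prompt)
```

The LLM call itself is left out on purpose: the point is that the model receives the user's question together with retrieved internal data, rather than the question alone.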

Enterprise LLM-RAG challenges

Since its conception in 2020, RAG has been based on the retrieval of documents from enterprise knowledge bases.

In a December 2023 report on conversational AI, Gartner estimates that it will take a few years for an enterprise LLM to benefit fully from RAG due to challenges like:

-

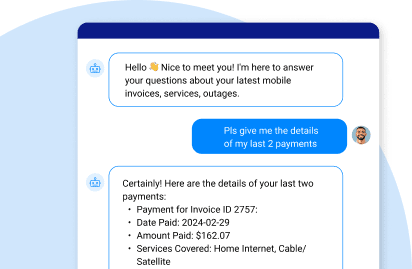

Applying generative AI to self-service customer support mechanisms, like a RAG chatbot

-

Building retrieval pipelines into applications

-

Combining insight engines with knowledge bases to run the retrieval function.

-

Indexing enterprise data and docs via embedding, pre-processing, and/or graphing solutions.

-

Hiding sensitive data from people who aren’t authorized to see it.

All of the above are problematic for enterprises, according to Gartner, due to data sprawl, ownership issues, skillset gaps, and technical restrictions.

In a subsequent January 2024 Gartner RAG report on augmenting large language models with internal data, the analysts advise enterprises to:

- Choose a pilot use case, in which business value can be clearly measured.
- Classify the use case data, as structured, semi-structured, or unstructured, to decide on the best ways of handling the data and mitigating the risk.
- Get all the metadata you can, because it provides the context for your RAG deployment and the basis for selecting your enabling technologies.

The enterprise LLM-RAG connection

An enterprise LLM-RAG solution carries out the framework's retrieval and generation capabilities through the following steps:

- Sourcing

  The first step in any enterprise LLM-RAG solution is data sourcing, usually from internal text documents and enterprise systems. The source data is your company’s knowledge base, which the retrieval model sifts through to locate and aggregate relevant information. To ensure accurate, diverse, and trusted data sourcing, data redundancy must be minimized.

- Unifying data for retrieval

  You should organize your data and metadata in such a way that RAG can instantly access it. For example, your customer 360 data – including master data, transactional data, and interaction data – should be unified for real-time retrieval. Depending on your use case, you may have to arrange your data according to other business entities, like employees, products, suppliers, or anything else that’s relevant to that workload.

- Chunking documents

  For the retrieval model to work effectively on unstructured documents, it is advisable to divide the data into more manageable chunks. Proper chunking can improve retrieval performance and accuracy. For example, a document may be a chunk on its own, but it could also be split further into chapters, paragraphs, sentences, or even words.

- Embedding (converting text to vector formats)

  The text found in documents must be converted into a format that RAG can use for search and retrieval. This process, called embedding, might entail transforming the text into vectors that are stored in a vector database. The embeddings are linked back to the source data, leading to more accurate and meaningful responses.

- Protecting sensitive data

  Unauthorized users should never be given access to the sensitive data retrieved by RAG. For example, a salesperson should never see a customer’s credit card information, and a customer service agent should never see a caller’s Social Security Number. To achieve this, your RAG solution should have dynamic data masking capabilities and role-based access controls built in.

- Generating the prompt

  Your RAG solution should automatically generate an enriched prompt by creating a story out of the retrieved 360-degree data. There also needs to be an ongoing tuning process for prompt engineering, facilitated by machine learning (ML) models if possible.
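The chunking, embedding, and data-protection steps above can be sketched in a few lines of Python. This is a simplified illustration only. The word-count "embedding" is a toy stand-in for a trained embedding model and vector database, and the masking patterns, role names, and sample document are hypothetical assumptions rather than part of any specific product.

```python
import math
import re
from collections import Counter
from typing import List

def chunk_by_paragraph(document: str) -> List[str]:
    """Split a document into paragraph-level chunks on blank lines."""
    return [p.strip() for p in re.split(r"\n\s*\n", document) if p.strip()]

def embed(text: str) -> Counter:
    """Toy embedding: a sparse word-count vector (assumption, not a real model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

# Hypothetical masking rules and role permissions.
PATTERNS = {
    "card": re.compile(r"\b(?:\d{4}[- ]){3}\d{4}\b"),
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}
ROLE_CAN_SEE = {"billing": {"card"}, "sales": set(), "support": set()}

def mask(text: str, role: str) -> str:
    """Mask every sensitive field the given role is not allowed to see."""
    allowed = ROLE_CAN_SEE.get(role, set())
    for name, pattern in PATTERNS.items():
        if name not in allowed:
            text = pattern.sub(f"[{name.upper()} MASKED]", text)
    return text

def retrieve(query: str, chunks: List[str], role: str, top_k: int = 1) -> List[str]:
    """Return the top-k most similar chunks, masked for the caller's role."""
    query_vec = embed(query)
    # Each vector stays paired with its source chunk.
    index = [(chunk, embed(chunk)) for chunk in chunks]
    ranked = sorted(index, key=lambda pair: cosine(query_vec, pair[1]), reverse=True)
    return [mask(chunk, role) for chunk, _ in ranked[:top_k]]

doc = """Customer Dana Lee pays with card 4111-1111-1111-1111.

Her open ticket is about a delayed router delivery."""

chunks = chunk_by_paragraph(doc)
question = "Which card does the customer pay with?"
print(retrieve(question, chunks, role="sales"))
print(retrieve(question, chunks, role="billing"))
```

Masking is applied at retrieval time, per role, so the same stored chunk can be returned in full to one user and redacted for another. That is the intent behind pairing dynamic data masking with role-based access controls.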

Enterprise LLM-RAG benefits

Enterprise LLMs benefit from RAG in the following ways:

- More rapid and cost-effective time to value

  Training an LLM is very time-consuming and costly. RAG makes GenAI accessible and reliable for customer-facing operations by offering a quicker and more affordable way to introduce new data to the LLM.

- Personalization of user interactions

  By integrating a specific 360-degree dataset with the extensive general knowledge of the LLM, RAG personalizes user interactions via chatbots, and tailors marketing insights, such as up-sell and cross-sell recommendations, delivered by human agents.

- Enhanced user trust

  RAG-powered LLMs generate reliable information via a combination of data accuracy, freshness, and relevance – personalized for a specific user. User trust protects and elevates the reputation of your brand.

Generative data products to the rescue

Generative data products are powering the enterprise LLM-RAG revolution. These AI-generated, reusable data assets combine clean, compliant, and current data with everything you need to make them quickly and easily accessible to qualified users.

A generative data product injects trusted, internal enterprise data into your RAG framework in real time. The combination of reliable inputs and speed lets you integrate your customer 360 or product 360 data from all relevant data sources, and then turn that data and context into relevant prompts. These prompts are automatically fed into your enterprise LLM along with the user’s query, enabling the model to generate a more precise and personalized response.

With a data product platform, generative data products can be accessed via API, CDC, messaging, or streaming – in any combination – allowing for data unification from multiple source systems. A data product approach can be applied to multiple RAG use cases – delivering insights derived from an organization’s internal information and data to:

- Speed up issue resolution
- Design hyper-personalized marketing campaigns
- Generate personalized cross-/up-sell recommendations for call center agents
- Detect fraud by identifying suspicious activity in a user account

Discover GenAI Data Fusion, the K2view RAG tool that turns a generic LLM into a unique enterprise LLM.
