With firms being held liable for their chatbot interactions, it's up to AI to ensure accurate answers. Having to rely on a human in the loop is a non-starter.

GenAI growing pains

As the business world increasingly relies on Retrieval-Augmented Generation (RAG) to power GenAI data apps for customer service (and more), questions of accountability persist. Who’s responsible for the nature of the interaction and the accuracy of the information provided by customer service chatbots?

A customer service chatbot that was recently caught swearing and disparaging the company that deployed it shows how GenAI can be a brand liability. Further, a Canadian tribunal recently found that GenAI chatbots can be legal and financial liabilities, too.

British Columbia's Civil Resolution Tribunal (which handles small claims, much like a US small claims court) recently ruled that Air Canada must compensate a customer due to misinformation provided by the airline's chatbot.

The potential value of AI data-driven apps is clear. But there’s something fundamentally flawed in how most of today's customer-service chatbots function. The question is, can chatbots be made more dependable, and less prone to AI hallucinations, without keeping a human in the loop?

Air Canada case study

The story begins when Mr. Jake Moffatt, faced with the sudden loss of his grandmother, needed to book an urgent flight to attend the funeral. Most airlines have what’s referred to as a bereavement policy, under which flights for recently bereaved relatives are heavily discounted.

Seeking assistance to book such a bereavement flight, Moffatt turned to an Air Canada chatbot, a virtual customer service agent powered by RAG conversational AI. Unfortunately, the chatbot provided inaccurate information. It directed Moffatt to purchase full-price tickets, falsely claiming that he would be refunded in accordance with the airline's bereavement fare policy. Trusting the chatbot's guidance, Moffatt ended up paying over $1,600 for a trip that would have only cost around $760 under the actual bereavement policy.

When Air Canada refused to refund Moffatt the difference, he decided to sue. Before the tribunal, Air Canada attempted to deflect responsibility for the chatbot's misleading advice, claiming that the chatbot functioned as a separate legal entity that was not under its direct control. The tribunal member swiftly rejected that claim, pointing out that the chatbot is an integral part of the airline’s website, and that Air Canada is ultimately responsible for all information disseminated through its official channels, whether it originates from a static webpage, an interactive chatbot, or a human in the loop.

The court ordered Air Canada to pay Mr. Moffatt a total of $812, including damages incurred, pre-judgment interest, and court fees. This landmark case is believed to be the first in Canada where a customer successfully sued a company for misleading advice provided by a chatbot.

The broader implications of no human in the loop

The Air Canada case is a cautionary tale for businesses that increasingly rely on generative AI applications and AI data readiness to streamline their customer service operations, instead of having a human in the loop.

This incident highlights the critical need for thorough testing and verification of information delivered by chatbots, particularly those using advanced generative AI technology. Chatbots based on active retrieval-augmented generation can access private enterprise data and knowledge bases to respond to customer queries accurately, without human intervention. The problem is, not all chatbots have been configured for, or equipped with, RAG tools.

Further, as the Moffatt case demonstrates, even the best data-driven chatbot can pose a significant liability if the right LLM guardrails aren't in place. The question is – which safeguards are most effective, efficient, and practical?

Human in the loop vs RAG tools

Experts predict a rise in legal disputes related to AI-powered chatbots as companies continue to integrate them into their customer service strategies. Clearly, significant improvements are necessary in chatbot technology. Today, there are three primary approaches to making them: having a human in the loop (HITL), retrieval-augmented generation (RAG), and fine-tuning.

HITL

Having a human in the loop is just what it sounds like: actual people managing the data used to train the chatbot and monitoring its interactions with users. Human involvement allows errors and biases to be identified and corrected on the fly, which ultimately leads to more accurate and trustworthy chatbot responses. But HITL comes at a high price. Training and retaining all those people is expensive, and the approach doesn’t scale well for businesses with high volumes of customer interactions.
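To make the scaling problem concrete, here is a minimal sketch of one common HITL pattern: sending only risky or low-confidence draft replies to a human reviewer. The `DraftReply` type, the `confidence` score, the 0.8 threshold, and the list of high-risk terms are all assumptions made for illustration. They don't describe any particular product, and a real chatbot may not expose a confidence score at all.

```python
from dataclasses import dataclass

# Assumed list of topics where a wrong answer is costly (illustrative only).
HIGH_RISK_TERMS = ("refund", "compensation", "legal")


@dataclass
class DraftReply:
    question: str
    answer: str
    confidence: float  # hypothetical score in [0, 1] supplied by the chatbot


def needs_human_review(draft: DraftReply, threshold: float = 0.8) -> bool:
    """Flag a draft if it touches a high-risk topic or scores below the threshold."""
    text = f"{draft.question} {draft.answer}".lower()
    risky = any(term in text for term in HIGH_RISK_TERMS)
    return risky or draft.confidence < threshold


def route(drafts):
    """Split drafts into (auto_send, review_queue)."""
    auto_send, review_queue = [], []
    for draft in drafts:
        (review_queue if needs_human_review(draft) else auto_send).append(draft)
    return auto_send, review_queue
```

Even with this kind of filtering, every flagged reply still costs reviewer time, so the review queue grows with interaction volume.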

RAG

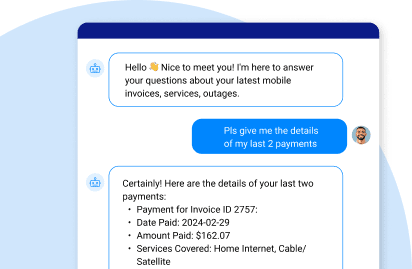

Market-leading RAG tools, like GenAI Data Fusion by K2view, inject enterprise data into Large Language Models (LLMs) in real time so that chatbots deliver responses users can trust. They help assure AI data quality using sophisticated prompt engineering techniques, which in turn increases customer intimacy and trust.
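The general idea of retrieval-augmented generation can be sketched in a few lines: look up the most relevant enterprise text for a question, then place it in the prompt so the model answers from that text rather than from memory. This is a simplified illustration, not K2view's implementation. The knowledge base, the policy wording, the keyword-overlap scoring, and the function names are all hypothetical, and the call to an actual LLM is left out.

```python
import re

# Hypothetical knowledge base. The policy wording is placeholder text,
# not a real airline policy.
KNOWLEDGE_BASE = [
    {"id": "bereavement", "text": "Bereavement fares must be requested before travel. "
                                  "Refunds cannot be claimed after the flight."},
    {"id": "baggage", "text": "Each passenger may check one bag free of charge on international routes."},
    {"id": "seats", "text": "Seat selection is available at check-in or for a fee at booking."},
]


def tokenize(text):
    """Lowercase the text and return its set of alphabetic words."""
    return set(re.findall(r"[a-z]+", text.lower()))


def retrieve(question, documents, top_k=1):
    """Rank documents by word overlap with the question (a stand-in for real search)."""
    question_words = tokenize(question)
    scored = [(len(question_words & tokenize(doc["text"])), doc) for doc in documents]
    scored = [(score, doc) for score, doc in scored if score > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:top_k]]


def build_prompt(question, documents):
    """Assemble a prompt that restricts the model to the retrieved context."""
    passages = retrieve(question, documents)
    if passages:
        context = "\n".join(f"[{doc['id']}] {doc['text']}" for doc in passages)
    else:
        context = "NO RELEVANT POLICY FOUND"
    return (
        "Answer using only the context below. "
        "If the context does not contain the answer, say you do not know.\n"
        f"Context:\n{context}\n"
        f"Question: {question}"
    )
```

Calling `build_prompt("Can I get a bereavement refund after my flight?", KNOWLEDGE_BASE)` yields a prompt that carries the bereavement passage, so the model has the current policy text in front of it when it answers. Production systems typically replace the keyword overlap with vector or structured-data search.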

Fine-tuning

Fine-tuning adjusts a pre-trained model to focus its capabilities on a specific task. An LLM is first trained on enormous amounts of data to learn general language patterns. Fine-tuning then trains the model further on a narrower dataset for specific generative AI use cases, such as customer service, code generation, or medical research.
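Much of the practical work in fine-tuning goes into preparing that narrower dataset. The sketch below shows one plausible preparation step: cleaning question-and-answer pairs and writing them as JSON Lines. The example pairs are placeholders, and the `prompt`/`completion` field names are an assumption. The exact format depends on the training framework or provider you use.

```python
import json

# Hypothetical support transcripts; the wording is placeholder text.
EXAMPLES = [
    ("How do I change my seat?", "You can change your seat from the Manage Booking page."),
    ("   ", "An answer with no question, which should be dropped."),
    ("How do I change my seat?", "You can change your seat from the Manage Booking page."),
    ("What is the checked bag limit?", "One checked bag is included on this fare."),
]


def to_jsonl(pairs):
    """Drop empty and duplicate pairs, then serialize one JSON object per line."""
    seen = set()
    lines = []
    for prompt, completion in pairs:
        prompt, completion = prompt.strip(), completion.strip()
        if not prompt or not completion or (prompt, completion) in seen:
            continue
        seen.add((prompt, completion))
        lines.append(json.dumps({"prompt": prompt, "completion": completion}))
    return "\n".join(lines)
```

Because a fine-tuned model learns from whatever is in this file, out-of-date or incorrect answers in the dataset become part of the model's behavior until it is retrained.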

Which approach, or combination of approaches, you choose will be determined by the requirements of the use case you're addressing.

The bottom line

The Air Canada case study highlights the critical need for businesses to ensure accurate and trusted chatbot responses. While having a human in the loop can improve accuracy, it’s impractical for high volumes of customer interactions. Field-proven enterprise RAG solutions offer a more scalable, viable, and reliable approach to ensuring AI personalization.

While generative AI offers immense potential to revolutionize customer service, proper safeguards are essential. By ensuring chatbot response accuracy, businesses can leverage the power of AI responsibly, avoid legal liability, and ensure a positive and rewarding customer experience.

Get to know K2view GenAI Data Fusion, the suite of RAG tools that doesn't require a human in the loop.