
In my previous post, I argued that most agentic AI systems don’t fail in production because large language models aren’t smart enough. They fail because production environments are unforgiving.

Since then, I’ve spoken with teams building operational AI systems across industries and use cases. Despite the diversity, the same core challenge keeps surfacing.

The hardest problem in agentic AI isn’t reasoning.

It’s context.

Reasoning is improving faster than context

Large language models are advancing rapidly. Planning, reasoning, and decision-making capabilities continue to improve. Agent frameworks are maturing. Tooling is evolving.

But the way we provide context to AI agents hasn’t kept pace.

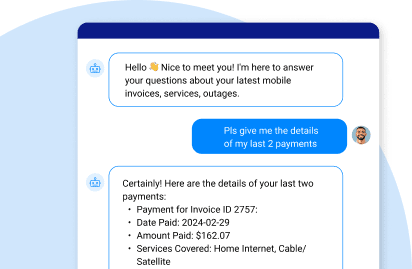

In real-world environments, context isn’t a static prompt or a retrieved document. It’s a dynamic, real-time representation of what’s happening right now—across multiple enterprise systems—for a specific business situation.

That’s where things begin to break.

Context in production is fragmented by design

Enterprise data was never built for AI agents.

Customer data lives in CRM systems.

Billing data resides elsewhere.

Operational state is stored in transactional systems.

Historical data is spread across warehouses and logs.

Unstructured knowledge sits in documents and policies.

To answer even a simple operational question, an AI agent often has to assemble context from all of these sources in real time.

In demos and POCs, this complexity is hidden. Data is pre-selected. Assumptions are hard-coded. Governance is relaxed.

In production, none of that holds.

The cost of assembling context at runtime

When context is built dynamically from fragmented systems, several issues emerge.

Latency increases.

Each additional system call, join, or transformation introduces delay. In operational workflows, even small delays matter.

Reliability degrades.

If one system is slow, stale, or inconsistent, the entire context becomes unreliable. The same query can return different results depending on timing and data availability.

Costs escalate.

Agents often ingest far more data than necessary because isolating the right subset is difficult. Every extra record becomes extra tokens in the prompt, so at scale this significantly increases inference costs.

Risk expands.

Broad data access makes it harder to enforce fine-grained governance, masking, and compliance policies consistently.

These are not model limitations.

They are context assembly problems.
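To make the reliability point concrete, here is a minimal sketch of runtime context assembly with a deadline. The source names, latencies, and the `assemble_context` helper are all hypothetical stand-ins, not any particular framework's API. It shows how a single slow system leaves the assembled context incomplete even though the other sources answered on time.

```python
import asyncio
import time

# Hypothetical per-source latencies in seconds; real systems would be network calls.
SOURCES = {"crm": 0.01, "billing": 0.02, "orders": 0.5}


async def fetch(name: str, delay: float) -> dict:
    """Stand-in for a call to one enterprise system."""
    await asyncio.sleep(delay)
    return {"source": name, "fetched_at": time.time()}


async def assemble_context(timeout: float) -> dict:
    """Query every source concurrently and record the ones that miss the deadline."""

    async def guarded(name: str, delay: float):
        try:
            return name, await asyncio.wait_for(fetch(name, delay), timeout)
        except asyncio.TimeoutError:
            return name, None

    results = await asyncio.gather(*(guarded(n, d) for n, d in SOURCES.items()))
    context = {name: data for name, data in results if data is not None}
    missing = [name for name, data in results if data is None]
    return {"context": context, "missing": missing, "complete": not missing}


result = asyncio.run(assemble_context(timeout=0.2))
print(result["missing"], result["complete"])
```

With these assumed numbers, the `orders` source misses the deadline, so the agent would have to either wait longer (more latency) or reason over a partial picture (less reliability).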

More data doesn’t mean better context

A common response is to give AI agents access to more data—more tables, more APIs, more documents.

In practice, this often makes things worse.

Excess data introduces noise. It increases ambiguity. It forces agents to reason over irrelevant or conflicting inputs.

The result is variable, inconsistent output and a loss of trust.

In operational AI, success depends not on how much data an agent can access, but on whether it receives the right data, scoped to the specific task and entity involved.

Precision matters more than volume.
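As a rough illustration of scoping to a task and entity, the sketch below trims a merged customer record down to the fields one task needs. The record, the `billing_dispute` task, and the `TASK_FIELDS` mapping are invented for this example; a real system would derive them from its own data model.

```python
import json

# Hypothetical customer record merged from several systems.
CUSTOMER = {
    "customer_id": "C-1001",
    "name": "Dana Example",
    "plan": "pro",
    "open_invoice_amount": 120.0,
    "invoice_due_date": "2024-07-01",
    "support_tickets": ["T-1", "T-2", "T-3"],
    "marketing_preferences": {"email": True, "sms": False},
    "login_history": ["2024-06-01", "2024-06-03", "2024-06-09"],
}

# Hypothetical mapping from a task to the fields that task actually needs.
TASK_FIELDS = {
    "billing_dispute": [
        "customer_id",
        "plan",
        "open_invoice_amount",
        "invoice_due_date",
    ],
}


def scope_context(record: dict, task: str) -> dict:
    """Return only the fields the given task requires."""
    return {name: record[name] for name in TASK_FIELDS[task]}


scoped = scope_context(CUSTOMER, "billing_dispute")
full_size = len(json.dumps(CUSTOMER))
scoped_size = len(json.dumps(scoped))
print(f"full: {full_size} chars, scoped: {scoped_size} chars")
```

The scoped payload is smaller, and it also contains nothing about tickets, logins, or marketing preferences that the agent could mistake for relevant evidence.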

The missing discipline

What’s missing in most agentic AI architectures today is a disciplined approach to context:

- What data is actually required for a given task
- How current that data needs to be
- How access should be constrained and governed
- How context should be assembled before reasoning begins

Without this discipline, context becomes an afterthought—something assembled at runtime instead of designed into the system.
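One way to picture context that is designed in rather than improvised is a small declarative spec that answers those four questions before the agent runs. The sketch below is an assumption-laden illustration: `ContextSpec`, `build_context`, and the `refund_review` task are hypothetical names, and the masking rule is deliberately simplistic.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class ContextSpec:
    """Hypothetical declaration of what one task may see."""

    task: str
    required_fields: tuple[str, ...]  # what data the task needs
    max_age: timedelta  # how current that data must be
    masked_fields: frozenset[str] = frozenset()  # values hidden from the agent


def build_context(spec: ContextSpec, record: dict,
                  fetched_at: datetime, now: datetime) -> dict:
    """Assemble context before reasoning; raise rather than pass on bad input."""
    if now - fetched_at > spec.max_age:
        raise ValueError(f"{spec.task}: data is older than {spec.max_age}")
    missing = [name for name in spec.required_fields if name not in record]
    if missing:
        raise KeyError(f"{spec.task}: missing fields {missing}")
    # Only required fields are returned; masked ones are replaced by a placeholder.
    return {
        name: "***" if name in spec.masked_fields else record[name]
        for name in spec.required_fields
    }


spec = ContextSpec(
    task="refund_review",
    required_fields=("order_id", "amount", "card_number"),
    max_age=timedelta(minutes=5),
    masked_fields=frozenset({"card_number"}),
)
now = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
record = {
    "order_id": "O-7",
    "amount": 42.5,
    "card_number": "4111111111111111",
    "notes": "not needed for this task",
}
context = build_context(spec, record, fetched_at=now - timedelta(minutes=1), now=now)
print(context)
```

The design choice here is that stale or incomplete data fails loudly at assembly time, so the model never reasons over input that violates the spec.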

That’s why context tends to break before models do.

What comes next

In the next post, I’ll explore why “more data” is the wrong answer for operational AI—and why production systems need a principled way to scope data access around tasks and entities, rather than broad datasets.

Because in agentic systems, context isn’t just input.

It’s the foundation everything else depends on.