A practical guide to preventing test data bottlenecks in the AI-SDLC

AI-assisted development needs a new test data paradigm

Last updated on May 14, 2026

AI is generating more code and tests than traditional test data management (TDM) workflows can support. The future of TDM is AI-composed validation data.

01

Key takeaways

- AI-assisted development is creating much more code, test cases, and application variation, much faster.
- Test data is becoming the strategic bottleneck because every AI-generated change still needs the right compliant data to be trusted and released.
- Traditional TDM remains essential, but AI-SDLC workflows require test data that can be determined and delivered from scenarios or natural-language test data requests.
- QA becomes more strategic as AI blurs the line between development, testing, automation, and validation.
- The future of TDM is AI-composed validation data – the right compliant data for every scenario, delivered instantly to preserve speed, coverage, and confidence.

02

Why AI-assisted development is turning test data into a strategic bottleneck

AI-Assisted Development (AIAD) means much more code, built much faster – with test data becoming the strategic bottleneck.

AI can now generate code, test cases, and prototypes in minutes. It’s not only accelerating development. It’s expanding who can participate in software creation and how many changes, variants, and test scenarios the enterprise must validate.

But every AI-generated change still needs the right compliant test data before it can be trusted, tested, and released. Without it, teams either wait for data or test less than they should.

As AI-assisted development scales, test data can no longer depend on human-paced requests, predefined datasets, or manual translation of what needs to be tested into data tasks.

The future of TDM is automatically turning every test scenario or natural-language test data request into the right compliant test data, on demand.

03

How is AI changing the volume and shape of software delivery?

AI-assisted development does more than help developers write code faster. It changes the entire scale of the Software Development Life Cycle (SDLC) itself.

A single developer can produce more code in less time. A tester can generate more test cases. A product owner can turn a user story into a working prototype. An external innovation partner can rapidly build proof-of-concepts for new AI-driven use cases.

That creates a very different testing challenge.

Instead of a controlled flow of planned development work, enterprises now face a larger and faster-moving stream of code changes, test cases, edge cases, and application variations. Each change may require a different set of customers, accounts, products, policies, transactions, exceptions, histories, relationships, or synthetic conditions before it can be properly tested.

That’s why AI productivity doesn’t automatically translate into faster software delivery. Analyst firm Gartner found that while 43% of engineers believe they’re exceeding expectations on software delivery with AI, 0% of engineering leaders agree. The disconnect is telling: AI can accelerate coding, but the surrounding delivery system still has to keep up.

The result is a widening gap between how fast software can now be created and how fast the right compliant test data can be delivered.

AI accelerates development. But without equally responsive test data, validation becomes the rate limiter for the AI-assisted SDLC (AI-SDLC).

04

Why weren’t traditional test data workflows designed for AI-speed development?

Enterprise test data management tools have given development and testing teams secure access to realistic, compliant data for years. TDM remains the foundation for masking sensitive data, provisioning lower environments, generating synthetic records, and reducing the risk of using production data in non-production systems.

But AI-assisted development challenges the test data paradigm.

In a traditional SDLC, test data needs are usually known in advance. A team defines a test case, identifies the required data, requests or prepares the dataset, applies masking or synthetic generation, and provisions it to the right environment.

That process can work when development moves in planned cycles.

But it breaks down when AI creates a constant stream of new scenarios and data needs. One test may require a customer with multiple accounts and a failed payment history. Another may need a policyholder with a specific claims sequence. Another may require a synthetic call transcript, a tokenized customer profile, or a precisely masked dataset shared with an external partner.

The issue is no longer simply access to data. It’s the translation gap between what needs to be tested – whether expressed as a formal test case, user story, generated script, or natural-language test data request – and the right combination of source data, masked data, tokenized data, and synthetic data.

That gap:

- Slows down delivery, because teams wait for data before they can validate AI-generated changes.
- Limits coverage, because when the right data is hard to get, teams test what they can – not necessarily what they should.
- Reduces confidence, because more generated code and more generated tests don’t automatically mean better validation.

In the AI-SDLC, the strategic challenge isn’t whether test data exists. It’s whether the right test data can be determined and delivered fast enough to preserve speed, coverage, and release confidence.

05

What is scenario- and request-driven test data?

To keep up with AI-assisted development, TDM must move beyond manually fulfilled data requests.

The new requirement is test data that can be created directly from what needs to be tested.

Sometimes that starts with a defined scenario: a test case, generated test script, user story, acceptance criteria, CI/CD pipeline step, or AI agent instruction. The system should be able to analyze that scenario and determine the data required to test it.

Other times, no formal scenario exists yet. A developer or QA engineer may simply know the data conditions they need and describe them in natural language, the same way they already prompt AI tools to generate code or test cases. For example:

“Give me customers with multiple active accounts, a failed payment in the last 30 days, and no missing KYC data.”

In both cases, the system should determine what data is needed, find or generate it, protect it, and deliver it on demand.

That may mean locating source-connected data across enterprise systems. It may mean applying masking or tokenization. It may mean generating synthetic records or unstructured business artifacts. Most often, it means combining these approaches to produce the exact compliant data required for the scenario.
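To make the idea concrete, here is a minimal sketch in Python of how the example request above might be expressed as structured data conditions and checked against candidate records. The `Customer` shape, its field names, and the `matches_request` helper are hypothetical and used only for illustration; they are not K2view's API. A real system would derive such conditions automatically and query source systems rather than an in-memory list.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class Customer:
    # Hypothetical record shape, for illustration only.
    customer_id: str
    active_accounts: int
    last_failed_payment: Optional[date]
    kyc_complete: bool


def matches_request(c: Customer, today: date) -> bool:
    """Conditions for: multiple active accounts, a failed payment
    in the last 30 days, and no missing KYC data."""
    recent_failure = (
        c.last_failed_payment is not None
        and timedelta(0) <= today - c.last_failed_payment <= timedelta(days=30)
    )
    return c.active_accounts >= 2 and recent_failure and c.kyc_complete


today = date(2026, 5, 14)
candidates = [
    Customer("C1", 3, date(2026, 5, 1), True),    # satisfies all conditions
    Customer("C2", 1, date(2026, 5, 10), True),   # only one active account
    Customer("C3", 2, date(2026, 1, 2), True),    # failed payment too old
    Customer("C4", 2, date(2026, 5, 12), False),  # KYC data missing
]
selected = [c.customer_id for c in candidates if matches_request(c, today)]
print(selected)  # ['C1']
```

Writing the conditions as an explicit predicate keeps the request auditable: each clause in the natural-language sentence maps to one term in the return expression.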

| From | To |
| --- | --- |
| Manually defined data requests | Scenario- and request-driven test data |
| Provisioning predefined datasets | Composing the right mix of source-connected, masked, tokenized, and synthetic data |
| Human translation of test needs into data tasks | Automated determination of required data conditions |
| Planned test cycles | AI-speed validation loops |
| Generic available data | Scenario-specific compliant test data |

The next paradigm for TDM is turning every test scenario or natural-language test data request into the right compliant test data, on demand.

06

How does AI change the role of QA?

AI-assisted development is blurring the traditional boundary between development and QA.

Developers can generate tests. QA teams can generate automation scripts, test utilities, and, in some cases, code. Business users and external partners can create prototypes and workflows that also need to be tested. As more roles participate in software creation, quality can no longer depend on a narrow, manual testing process at the end of the SDLC.

AI doesn’t make QA less important. It makes QA more strategic.

When AI can generate code and test cases, QA becomes responsible for governing quality across a much larger and faster-moving delivery system. QA teams need to define which scenarios matter, where risk is highest, which edge cases must be covered, what data conditions are required, and whether the results are reliable enough to release.

The opportunity is an increasingly automated validation loop:

- Create the code.
- Generate the tests.
- Compose the validation data from the right mix of masked source data, tokenized data, synthetic data, and business context.
- Execute the tests.
- Evaluate the results.
- Feed the findings back into the SDLC.
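The data-dependent steps of this loop can be sketched in a few lines of Python. This is an illustrative outline under stated assumptions, not a reference implementation: `run_validation_loop`, `LoopResult`, and the two stub functions are hypothetical names, and the compose and execute steps are passed in as plain callables so that any real tooling could be substituted.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class LoopResult:
    scenario: str
    passed: bool
    findings: List[str] = field(default_factory=list)


def run_validation_loop(
    scenarios: List[str],
    compose_data: Callable[[str], List[dict]],
    execute_test: Callable[[str, List[dict]], bool],
) -> List[LoopResult]:
    """Compose data, execute, and record findings for each scenario."""
    results = []
    for scenario in scenarios:
        data = compose_data(scenario)
        if not data:
            # Without validation data the scenario cannot be executed at all.
            results.append(LoopResult(scenario, False, ["no validation data available"]))
            continue
        passed = execute_test(scenario, data)
        findings = [] if passed else ["test failed against composed data"]
        results.append(LoopResult(scenario, passed, findings))
    return results


def stub_compose(scenario: str) -> List[dict]:
    # Hypothetical catalog: only one scenario has data available.
    catalog = {"failed payment retry": [{"customer_id": "C1", "failed_payments": 1}]}
    return catalog.get(scenario, [])


def stub_execute(scenario: str, data: List[dict]) -> bool:
    return all(row["failed_payments"] > 0 for row in data)


results = run_validation_loop(
    ["failed payment retry", "loyalty upgrade"], stub_compose, stub_execute
)
for r in results:
    print(r.scenario, r.passed, r.findings)
```

In this sketch the second scenario never reaches execution because no data could be composed for it, which is the failure mode the loop is meant to remove.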

But that loop only works if validation data is automated too.

If the right compliant validation data still depends on manual requests, predefined datasets, or specialist teams translating each scenario into provisioning tasks, the validation loop breaks.

Once AI can generate code and tests, the remaining constraint is validation data.

Automated validation data composition is the final bottleneck to overcome – the capability that turns AI-assisted development into an end-to-end automated validation loop.

07

What are the 6 requirements for AI-SDLC-ready validation data?

To support the AI-SDLC, test data management must move beyond provisioning datasets into lower environments. It must become a validation data capability that can determine, compose, protect, and deliver the right data for every test scenario or natural-language test data request.

That requires 6 capabilities working together:

1. Understand what needs to be tested

The input may be a user story, acceptance criteria, generated test case, automated test script, CI/CD pipeline step, AI agent instruction, or a developer’s description of the data they need.

The user shouldn’t have to know which systems, tables, relationships, masking rules, or synthetic data logic are required.

2. Determine the required data conditions

AI-SDLC-ready validation data must identify the business entities, relationships, histories, transactions, states, exceptions, and edge cases needed to test the scenario properly.

For example, the requirement may not be “give me policy data.” It may be: “Give me auto insurance policyholders with an open claim, a recent address change, and a pending payment adjustment.”

3. Compose validation data from the right mix of sources

Some scenarios require real, source-connected data that preserves business relationships across systems. Others require masked or tokenized data. Others require synthetic data for rare edge cases, missing conditions, or sensitive scenarios that shouldn’t use production data.

In many cases, the right answer is a combination of all of these.
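One simple way to picture such a combination is to use matching source records first and fill any shortfall with synthetic ones. The sketch below assumes a hypothetical `compose_dataset` helper and made-up policy fields. It tags each row with its origin so the mix stays visible, and it omits the masking a real flow would apply to source rows.

```python
import random
from typing import Dict, List


def compose_dataset(source_rows: List[Dict], needed: int, seed: int = 0) -> List[Dict]:
    """Use matching source rows first; fill any shortfall with synthetic rows."""
    rng = random.Random(seed)  # seeded so repeated runs produce the same data
    composed = [dict(row, origin="source") for row in source_rows[:needed]]
    shortfall = needed - len(composed)
    for i in range(shortfall):
        composed.append({
            "policy_id": f"SYN-{i:04d}",       # synthetic IDs are clearly marked
            "open_claims": rng.randint(1, 3),  # satisfies the "open claim" condition
            "origin": "synthetic",
        })
    return composed


# Two real matches exist, but the scenario needs five policies.
source_matches = [
    {"policy_id": "P-1001", "open_claims": 1},
    {"policy_id": "P-1002", "open_claims": 2},
]
dataset = compose_dataset(source_matches, needed=5)
print([row["origin"] for row in dataset])
# ['source', 'source', 'synthetic', 'synthetic', 'synthetic']
```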

4. Preserve business relationships and realism

AI-SDLC-ready validation data must maintain the relationships that make enterprise data meaningful: customers to accounts, policies to claims, orders to payments, products to subscriptions, cases to interactions, and so on.

If those relationships break, the data may be compliant but not useful. Tests may execute, but they won’t reflect real business conditions.

This is especially important when validation data combines masked source data, tokenized data, and synthetic data. The composed dataset must remain realistic, referentially intact, and fit for the scenario being tested.
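A basic referential-integrity check illustrates what "referentially intact" means in practice: every child record must point to a parent that is present in the composed dataset. The table shapes and the `find_orphans` helper below are assumptions made for the example.

```python
from typing import Dict, List, Set


def find_orphans(children: List[Dict], parent_ids: Set[str], fk: str) -> List[Dict]:
    """Return child rows whose foreign key has no matching parent."""
    return [row for row in children if row[fk] not in parent_ids]


customers = [{"customer_id": "C1"}, {"customer_id": "SYN-1"}]
accounts = [
    {"account_id": "A1", "customer_id": "C1"},
    {"account_id": "A2", "customer_id": "SYN-1"},
    {"account_id": "A3", "customer_id": "C9"},  # parent missing from the dataset
]

customer_ids = {c["customer_id"] for c in customers}
orphans = find_orphans(accounts, customer_ids, "customer_id")
print([o["account_id"] for o in orphans])  # ['A3']
```

A check like this could run as a gate before the dataset is delivered, so that broken relationships are caught before tests execute against them.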

5. Protect sensitive data automatically

Validation data must be compliant before it reaches lower environments, automated pipelines, external partners, AI tools, or LLM-based workflows.

Privacy controls can’t be bolted on after the fact or left to individual teams to manage manually.
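As one illustration of an automated privacy control, the sketch below uses keyed hashing (HMAC-SHA256 from Python's standard library) to tokenize an identifier deterministically, so the same input always maps to the same token and joins across tables still work. This is a commonly used general technique chosen for the example, not a description of how K2view implements tokenization. The key, field names, and `tokenize` helper are placeholders.

```python
import hashlib
import hmac

# Assumption: in practice the key would come from a secrets manager.
SECRET_KEY = b"demo-key-not-for-production"


def tokenize(value: str, key: bytes = SECRET_KEY) -> str:
    """Deterministically replace a sensitive value with a keyed-hash token."""
    digest = hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()
    return "tok_" + digest[:16]


customers = [{"ssn": "123-45-6789", "name": "Ada"}]
accounts = [{"owner_ssn": "123-45-6789", "balance": 250}]

masked_customers = [{"ssn": tokenize(c["ssn"]), "name": "REDACTED"} for c in customers]
masked_accounts = [
    {"owner_ssn": tokenize(a["owner_ssn"]), "balance": a["balance"]} for a in accounts
]

# The raw identifier is gone, but the customer-to-account link survives.
print(masked_customers[0]["ssn"] == masked_accounts[0]["owner_ssn"])  # True
```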

6. Deliver data directly into the workflow

AI-assisted development moves through prompts, agents, APIs, pipelines, and self-service tools.

Validation data must be available through those same channels, without waiting for tickets, handoffs, or specialized data preparation.

AI-SDLC-ready validation data must do more than supply data. It must compose the exact mix of masked, tokenized, synthetic, and source-connected data required to test every scenario with speed, coverage, and confidence.

08

How does K2view bring AI-composed validation data to the AI-SDLC?

K2view helps enterprises overcome the final bottleneck in AI-assisted development: turning every test scenario or natural-language test data request into the right compliant data package to validate it – instantly.

This builds on the foundation of enterprise TDM: secure access to real data, data masking, data tokenization, synthetic data generation, and compliant provisioning. But the AI-SDLC requires something more. It requires test data to become validation data – the complete, compliant mix of real, masked, tokenized, synthetic, and contextual data needed to prove whether a scenario works.

That’s where AI-composed validation data comes in.

Instead of asking developers, QA engineers, or data teams to manually define every provisioning, masking, and synthetic data task, K2view instantly composes the right compliant validation data for each scenario or natural-language test data request. It determines what data is needed, finds or generates it, applies the appropriate privacy controls, preserves business relationships, and delivers it on demand – helping teams validate AI-generated changes quickly while maximizing test coverage.

K2view supports validation data composition in 2 primary ways.

1. Autonomous composition from test scenarios

When a test case, generated script, user story, acceptance criteria, CI/CD step, or AI agent instruction already exists, K2view can derive the required validation data directly from that input.

It identifies the necessary data conditions, accesses source-connected data where appropriate, applies masking or tokenization, generates synthetic data when needed, and delivers the complete compliant data package for testing.

This allows AI-generated tests to move into automated validation without waiting for manual data preparation – accelerating validation while expanding the range of scenarios teams can cover.

2. Conversational composition from natural-language test data requests

When no formal scenario exists yet, developers and QA teams can describe the data they need in plain language.

For example:

“Give me retail customers with abandoned carts over $500, at least one recent support interaction, and no active discount applied.”

K2view translates that request into the required data conditions, composes the right data package from the appropriate mix of source-connected, masked, tokenized, and synthetic data, protects sensitive information, and makes it available for testing.

This gives test data the same conversational experience teams already use for AI-assisted coding and test generation, so they can validate more scenarios without waiting for manual data setup.

Closing the validation loop

For LLM-centric applications and AI agents, validation doesn’t just stop at executing tests against the right data. Teams also need to evaluate whether the AI system produced the right output.

That means testing prompts, responses, decisions, recommendations, actions, tone, policy adherence, and business correctness against expected outcomes. A customer service chatbot, for example, shouldn’t only retrieve the right customer context. It must respond accurately, follow compliance rules, take the right next action, and avoid exposing sensitive data.

K2view supports this by using business data to generate realistic prompts and evaluate AI system responses against expected outcomes derived from that same data. This helps teams assess correctness, consistency, compliance, politeness, and other business-specific quality criteria.
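A toy version of this kind of evaluation is sketched below: the expected outcome is derived from the same business record used to build the prompt, and the response is checked for correctness and for leakage of a sensitive field. The `evaluate_response` helper, the record fields, and the substring checks are simplifying assumptions for illustration. Criteria such as tone or policy adherence would need richer evaluators.

```python
from typing import Dict, List


def evaluate_response(
    response: str, record: Dict[str, str], forbidden_fields: List[str]
) -> Dict[str, bool]:
    """Score a response against expectations derived from the source record."""
    expected_balance = record["balance"]
    return {
        # Correctness: the response must state the balance held in the record.
        "correct": expected_balance in response,
        # Compliance: no forbidden field value may appear in the response.
        "no_leak": not any(record[f] in response for f in forbidden_fields),
    }


record = {"name": "Ada", "balance": "250.00", "card_number": "4111111111111111"}
good = "Hi Ada, your current balance is 250.00."
bad = "Your balance is 199.00 on card 4111111111111111."

print(evaluate_response(good, record, ["card_number"]))
# {'correct': True, 'no_leak': True}
print(evaluate_response(bad, record, ["card_number"]))
# {'correct': False, 'no_leak': False}
```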

Together, AI-composed validation data and AI system evaluation close the loop for the AI-SDLC: the right data is composed for every scenario, the test is executed, and the AI-generated output is evaluated against what the business expects.

The future of TDM is AI-composed validation data: instantly turning every test scenario or natural-language test data request into the right compliant data package to validate AI-generated change with speed and maximum test coverage.

09

Conclusion: What is the future of TDM in the AI-SDLC?

AI-assisted development changes what enterprises need from test data management.

TDM remains essential. Enterprises still need secure access to source-connected data, masking, tokenization, synthetic data, compliant provisioning, and integration with lower environments and delivery pipelines.

But the AI-SDLC raises the bar.

When AI can generate more code, more tests, and more application variations in minutes, test data can no longer be the part of the process that waits for manual interpretation, predefined datasets, tickets, or handoffs. It must become part of the automated validation loop.

That means turning every test scenario – or every natural-language test data request – into the right compliant data package instantly. The right mix of masked source data, tokenized data, synthetic data, and business context. The right relationships preserved. The right privacy controls applied. The right data delivered into the workflow, when and where it’s needed.

That’s how enterprises move from AI-assisted coding to AI-assisted delivery.

Not just faster code generation. Not just more test cases. Not just automation around the same old data constraint. But a new test data paradigm built for speed, coverage, and confidence.

The future of TDM is AI-composed validation data: the right compliant data for every scenario, delivered instantly so AI-generated software can be validated as fast as it’s created.

Talk to K2view to learn how your test data strategy can support the AI-SDLC today.

10

FAQs

1. What is AI-composed validation data?

AI-composed validation data is the complete compliant data package needed to validate a specific test scenario or natural-language test data request. It may include source-connected data, masked data, tokenized data, synthetic data, unstructured artifacts, and business context, assembled for the scenario being tested.

2. How is AI-composed validation data different from synthetic test data?

Synthetic test data is one possible ingredient. AI-composed validation data is broader. It may combine masked source data, tokenized data, synthetic records, unstructured artifacts, and preserved business relationships to create the right compliant data package for a specific scenario.

3. Does AI-composed validation data replace TDM?

No. It builds on TDM. Enterprises still need masking, tokenization, synthetic data generation, compliant provisioning, and access to source-connected data. AI-composed validation data extends those capabilities so they can support AI-speed development and automated validation.

4. Who uses AI-composed validation data?

Developers, QA engineers, test automation teams, platform teams, and external innovation partners can all use AI-composed validation data. Some may start from formal test cases or generated scripts. Others may simply describe the data conditions they need in natural language.

5. Where does AI-composed validation data fit in the SDLC?

It fits wherever software needs to be validated: local development, lower environments, automated testing, CI/CD pipelines, partner sandboxes, and AI application evaluation workflows. The goal is to make compliant data available at the same speed as AI-generated code and tests.

6. How does AI-composed validation data support compliance?

It applies privacy controls such as masking, tokenization, or synthetic data generation before data reaches lower environments, AI tools, external partners, or automated pipelines. This helps teams validate faster without expanding sensitive-data exposure.

7. What’s the difference between test data and validation data?

Test data is the familiar TDM term for data used in software testing. Validation data is broader. It refers to the full compliant data package – including relationships, context, masked or tokenized elements, synthetic data, and sometimes unstructured artifacts – needed to prove whether a scenario or AI-generated output works correctly.

Complimentary DOWNLOAD

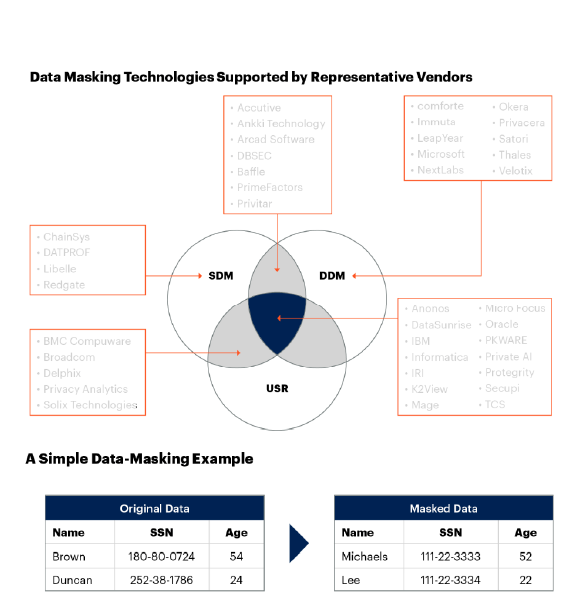

Free Gartner Report: Market Guide for Data Masking

Learn all about data masking from industry analyst Gartner:

-

Market description, including dynamic and static data masking techniques

-

Critical capabilities, such as PII discovery, rule management, operations, and reporting

-

Data masking vendors, broken down by category