A practical guide to AI data governance

AI data governance for agentic systems

Last updated on April 16, 2026

Traditional AI data governance was built for models. Agentic systems need governance for runtime context, high precision, and controlled action.

01

Key takeaways

- Traditional AI data governance focuses on datasets, pipelines, and model controls.

- Agentic systems introduce a governance challenge at runtime.

- Data products define the governed context foundation; data agents enforce runtime control for each request, user, entity, and task.

- The core issue is controlling the context AI receives for a specific task.

- More data usually creates more noise, risk, and cost.

- Agentic systems need task-scoped, entity-scoped, and policy-controlled context.

02

AI data governance is changing

For years, AI data governance referred to the familiar set of controls around data quality, privacy, access, lineage, and compliance in the context of AI. These controls still matter. But agentic AI has changed the scale of the challenge.

Agentic systems don’t just consume governed datasets. They assemble context in real time from prompts, documents, APIs, operational systems, tools, and memory. That context determines what the AI knows, how it responds, and when it can take action.

That’s why AI data governance can no longer focus only on source data and model inputs. It must also govern runtime context.

1. What is traditional AI data governance?

Traditional AI data governance is the practice of controlling how data is accessed, protected, and used in AI systems. That typically includes:

- Data quality and consistency

- Privacy and compliance

- Access control and security

- Lineage and traceability

- Policies for model use

This definition still works for analytical AI, where data usually moves through relatively stable pipelines. But it becomes incomplete with GenAI and agentic AI, where context is assembled dynamically during live interactions.

2. Why AI governance must change for agentic systems

The main shift is that the unit of control changes.

Traditional AI data governance is centered on datasets. Agentic AI introduces a second layer centered on runtime context.

An agentic system may retrieve information from multiple enterprise sources, combine structured and unstructured data, use tools, and trigger downstream actions. In that kind of workflow, the key question is no longer just whether the source systems are governed. It is whether the AI received the right context for the task, under the right controls, before it reasoned or acted.

That’s very different from classic data governance.

03

Why runtime context matters in AI data governance

Context determines behavior.

If the context is incomplete, the output is unreliable. If it is stale, the decision may be wrong. If it is too broad, the system becomes noisy, expensive, and harder to control. If it includes sensitive data that should have stayed out, governance has already failed.

Imagine an AI agent handling a billing dispute

The task may be to review the latest bill, identify the disputed charge, explain it, and, if policy allows, issue a credit or open a follow-up workflow.

To do that safely, the agent may need:

- The customer’s current account and plan details

- The latest invoice and payment status

- Usage records tied to the disputed charge

- Any recent plan changes or promotions

- Open tickets or prior dispute history

- The current refund or credit policy

- The action limits assigned to that agent

This is where runtime context governance matters

The agent should not get broad access to the customer’s entire history, unrelated household accounts, or sensitive identity fields it does not need. It should receive only the context required for this billing dispute, for this customer, at this moment.

That means governance must control:

- Task scope: The agent gets only the data needed to resolve a billing dispute.

- Entity scope: The context is limited to the specific customer account involved.

- Freshness: Billing, usage, payment, and ticket data must reflect current state.

- Policy enforcement: Sensitive fields are masked, restricted data is excluded, and refund rules are applied before the agent reasons.

- Action control: The agent may explain charges and recommend a credit, but only issue one if policy conditions are met.

- Traceability: The enterprise can see what context was assembled, what checks were applied, and why the action was taken.
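
As a rough illustration of task scope, entity scope, and masking, the Python sketch below filters raw records down to the context a billing-dispute agent might receive. The field names, the `TASK_FIELDS` allowlist, the `MASKED_FIELDS` set, and the `assemble_context` helper are all hypothetical; they are assumptions made for this example and do not describe any specific product.

```python
# Hypothetical allowlist of fields per task; names are illustrative only.
TASK_FIELDS = {
    "billing_dispute": {
        "account_id", "plan", "latest_invoice",
        "payment_status", "payment_card",
    },
}

# Hypothetical fields that are masked even when the task may see them.
MASKED_FIELDS = {"payment_card"}


def mask(value: str) -> str:
    """Replace all but the last four characters with asterisks."""
    return "*" * max(len(value) - 4, 0) + value[-4:]


def assemble_context(task: str, customer_id: str, records: list) -> dict:
    """Build the context for one task and one customer."""
    allowed = TASK_FIELDS.get(task, set())
    context = {}
    for record in records:
        # Entity scope: ignore records that belong to other customers.
        if record.get("customer_id") != customer_id:
            continue
        for name, value in record.items():
            # Task scope: drop fields this task does not need.
            if name not in allowed:
                continue
            # Policy enforcement: mask sensitive values before delivery.
            context[name] = mask(str(value)) if name in MASKED_FIELDS else value
    return context


# Illustrative in-memory records standing in for governed source systems.
records = [
    {"customer_id": "C-100", "account_id": "A-1", "plan": "Unlimited",
     "ssn": "123-45-6789"},
    {"customer_id": "C-100", "latest_invoice": 82.50, "payment_status": "paid",
     "payment_card": "4111111111111111"},
    {"customer_id": "C-200", "account_id": "A-2", "plan": "Basic"},
]
print(assemble_context("billing_dispute", "C-100", records))
```

In this sketch, the record for customer C-200 and the `ssn` field never reach the agent, and the card number arrives masked. A real deployment would apply the same filtering against governed sources rather than in-memory dictionaries.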

Without that governance, the same agent could pull too much data, miss a recent payment, expose sensitive information, or approve a credit it was not authorized to issue.

Runtime context is the real issue because it sits at the point where data turns into behavior.

04

Why is more data usually a mistake?

A common instinct in enterprise AI is to widen access when performance falls short. More tables. More documents. More APIs. More history.

That often makes the system worse.

More data increases ambiguity, token cost, privacy exposure, and governance complexity. It gives the model more chances to reason over irrelevant or conflicting information.

Agentic systems do not need maximum context. They need precise operational context.

That means the AI should receive only the information required for the specific task, for the specific entity involved, with the right controls already applied.

05

What should AI data governance for agentic systems include?

For agentic systems, AI data governance should include 5 core controls:

- Task-based access: The AI should access only the data required for the task it is performing.

- Precisely scoped context: The AI should receive context limited to the customer, claim, order, account, or device involved in the task.

- Runtime policy enforcement: Privacy, masking, compliance, consent, security policies, and access controls should be enforced at runtime, based on the task, user, agent, entity, and operational instance.

- Freshness and state awareness: Operational AI must work from current state, not just historical records.

- Controlled action and traceability: If the system can trigger workflows, update records, or call tools, those actions must be audited and traceable.

Together, these controls define a stronger model for AI data governance for agentic systems.
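
Two of these controls, freshness and controlled action with traceability, can be sketched in a few lines of Python. The five-minute freshness window, the credit limit, and the names `AuditLog`, `is_fresh`, and `authorize_credit` are assumptions chosen for illustration, not values or APIs taken from any real system.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone

MAX_AGE = timedelta(minutes=5)   # assumed freshness window
MAX_AUTO_CREDIT = 50.00          # assumed credit limit for the agent


@dataclass
class AuditLog:
    """Collects one entry per authorization attempt."""
    entries: list = field(default_factory=list)

    def record(self, action: str, allowed: bool, reason: str) -> None:
        self.entries.append(
            {"action": action, "allowed": allowed, "reason": reason}
        )


def is_fresh(fetched_at: datetime, now: datetime) -> bool:
    """True if the context was fetched within the freshness window."""
    return now - fetched_at <= MAX_AGE


def authorize_credit(amount: float, fetched_at: datetime,
                     now: datetime, audit: AuditLog) -> bool:
    """Allow a credit only on fresh context and within the agent's limit."""
    if not is_fresh(fetched_at, now):
        audit.record("issue_credit", False, "stale context")
        return False
    if amount > MAX_AUTO_CREDIT:
        audit.record("issue_credit", False, "amount exceeds agent limit")
        return False
    audit.record("issue_credit", True, "within policy")
    return True


# Illustrative usage with a fixed clock so the result is repeatable.
now = datetime(2026, 1, 1, 12, 0, tzinfo=timezone.utc)
audit = AuditLog()
authorize_credit(20.0, now - timedelta(minutes=1), now, audit)
authorize_credit(20.0, now - timedelta(minutes=10), now, audit)
for entry in audit.entries:
    print(entry)
```

Every attempt, allowed or denied, leaves an audit entry with a reason, which is the minimum needed to explain later why an action was or was not taken.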

06

Why traditional data governance can’t keep up

Most enterprise data governance programs were built to control data stores, access rights, privacy rules, lineage, and compliance. Those controls still matter, but they were designed for relatively stable data flows and known access patterns.

Agentic systems introduce a whole new challenge. They assemble context dynamically across multiple sources, for a specific task, in real time. That means the governance problem is no longer just about whether source data is classified or protected. It’s also about whether the right context is assembled, under the right constraints, before the AI reasons or acts.

This is where traditional data governance falls short. It governs data sources better than runtime context. It’s focused on access to systems rather than control over task-specific context. For example, it can tell you who may access a table or application. But it’s less effective at deciding what an AI system should receive to settle a live customer dispute, claims review, or service action.

The challenge is no longer just governing data at rest. It’s governing how context is assembled in motion.

07

Agentic systems need runtime context governance

The real enterprise AI challenge is not broad data access. It’s controlled context assembly across fragmented systems.

From that perspective, agentic systems need a data layer that can:

- Unite fragmented data from many sources.

- Organize it around business entities.

- Deliver only the data required for the task.

- Apply governance before the AI reasons.

- Support safe, governed action into systems of record.

That’s where K2view comes in.

1. Market demand for better governance for GenAI

Our 2026 State of Enterprise Data Readiness for GenAI survey indicates that 76% of organizations say guardrails around the effective and responsible use of GenAI are a top obstacle to production deployment.

Furthermore, only 13% of the companies surveyed have enforced technical controls preventing sensitive data from entering GenAI or LLM systems.

The gap is clear. Enterprises know governance matters, but many still have not operationalized it at runtime.

2. The shift to runtime data governance

As previously mentioned, data governance was once mainly concerned with data before it reached the model. The focus was on classification, access rights, data masking, lineage, and compliance across source systems and pipelines. That approach still matters, but it assumes data usage is relatively predictable.

Agentic systems are not predictable. They assemble context dynamically, pull from multiple sources, and may move from retrieval to action in a single flow.

That means governance can no longer stop at the source or policy level. It has to shape what context is assembled for the task, what data is excluded, what policies are enforced before reasoning, and what actions are allowed afterward.

Runtime data governance extends traditional governance into the operational realm where agentic systems succeed or fail.

The question is no longer about whether the underlying data is governed. It’s now about whether the AI received the right context, under the right constraints, in time to produce a safe and reliable outcome.

3. The role of data agents in runtime governance

Unlike AI agents, data agents govern execution in the data control plane.

An AI agent interprets the task, reasons through the business objective, and decides what needs to happen next.

A data agent provides the controlled data access and action layer behind that reasoning. It retrieves approved, entity-specific context from the data product layer, supplies that context to the AI agent, and performs authorized updates or actions through governed interfaces.

This distinction matters because governance operates at 2 levels.

At the data product level, governance is built into the data foundation itself. Entity-centric data products define the structure of the business entity, capture ownership and lineage, apply data quality and masking rules, and expose approved ways to read and update data. This establishes the governed context that AI agents can rely on.

At the runtime level, data agents enforce the rules for each interaction. They evaluate the request in context: Who is asking, which AI agent is involved, what entity is in scope, what task is being performed, what consent and privacy rules apply, and which security policies must be honored. Based on that evaluation, the data agent determines which context can be shared and which actions can be executed. Each interaction is also captured for audit and traceability.

Put simply, data products define the governed possibilities. Data agents decide what is permitted at any given moment.
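
The runtime evaluation described above can be pictured as a deterministic function that sits outside the AI agent's reasoning. The following Python sketch is one possible shape for it, under stated assumptions: the `Request` and `Decision` types, the `POLICIES` table, and the role and action names are hypothetical and exist only to make the idea concrete.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Request:
    user_role: str       # who is asking
    ai_agent: str        # which AI agent is involved
    task: str            # what task is being performed
    entity_id: str       # which entity is in scope
    consent_given: bool  # whether consent rules are satisfied


@dataclass(frozen=True)
class Decision:
    entity_id: Optional[str]
    context_fields: frozenset
    actions: frozenset
    reason: str


# Hypothetical policy table keyed by (AI agent, task).
POLICIES = {
    ("billing_assistant", "billing_dispute"): {
        "fields": {"latest_invoice", "payment_status", "plan"},
        "actions": {"explain_charge", "recommend_credit"},
        "privileged_actions": {"issue_credit"},
        "privileged_roles": {"support_rep"},
    },
}


def deny(reason: str) -> Decision:
    """Return a decision that shares no context and allows no actions."""
    return Decision(None, frozenset(), frozenset(), reason)


def evaluate(request: Request) -> Decision:
    """Decide which context and actions are permitted for this request."""
    policy = POLICIES.get((request.ai_agent, request.task))
    if policy is None:
        return deny("no policy for this agent and task")
    if not request.consent_given:
        return deny("customer consent missing")
    actions = set(policy["actions"])
    # Only privileged roles unlock actions that change systems of record.
    if request.user_role in policy["privileged_roles"]:
        actions |= policy["privileged_actions"]
    return Decision(
        request.entity_id,
        frozenset(policy["fields"]),
        frozenset(actions),
        "policy matched",
    )


decision = evaluate(
    Request("support_rep", "billing_assistant", "billing_dispute", "C-100", True)
)
print(sorted(decision.actions), decision.reason)
```

Because the same inputs always produce the same decision, the outcome does not depend on how any individual AI agent interprets policy text.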

This keeps data governance out of the AI agent’s chain-of-thought reasoning process. Rather than relying on every AI agent implementation to interpret policies correctly, control is applied consistently and deterministically by the data layer.

AI agents reason.

Data agents govern access, action, and auditability.

08

Conclusion

AI data governance must become more operational.

For traditional AI, governance focused on datasets, pipelines, and model controls.

For agentic systems, it must also govern runtime context. That means controlling what information is assembled, how it is scoped, what policies are enforced before reasoning, and whether the system is allowed to act on the result.

That’s why the next stage of AI data governance is not better policy, but better operational context.

09

FAQs about AI data governance for agentic systems

How is runtime data governance different from data security?

Data security focuses on protecting systems and data from unauthorized access, loss, or misuse. Runtime data governance is narrower and more operational. It controls what context an AI system is allowed to receive for a specific task, what policies apply before reasoning, and what actions are allowed afterward.

Does runtime data governance replace traditional data governance?

No. Traditional data governance is still necessary. Enterprises still need classification, lineage, privacy controls, ownership, and compliance policies. Runtime data governance builds on that foundation and applies those controls at the point where an AI system assembles context and acts on it.

What kinds of agentic AI use cases need runtime data governance most?

It matters most in operational use cases where AI interacts with live business processes, such as AI customer service, claims handling, billing disputes, fraud review, service operations, loan processing, and employee support. These are cases where bad context can lead to bad actions, not just weak answers.

Why are policy-based controls alone not enough for agentic systems?

Policy-based controls are not enough if they depend on each AI agent to interpret and apply governance consistently. In agentic systems, governance must be enforced deterministically at runtime.

Entity-centric data products define the governed foundation, including ownership, lineage, masking, quality rules, and approved interfaces. Data agents then evaluate each request against the user, AI agent, task, entity, permissions, consent, privacy rules, and security policies to determine what context can be delivered and which actions are allowed.

In short, data products define what is possible; data agents determine what is permissible.

How do I evaluate a runtime data governance solution?

Look at whether it can scope context to the task, enforce policy before reasoning, limit unnecessary data exposure, work with current operational state, and support traceability for both context and actions.

Complimentary DOWNLOAD

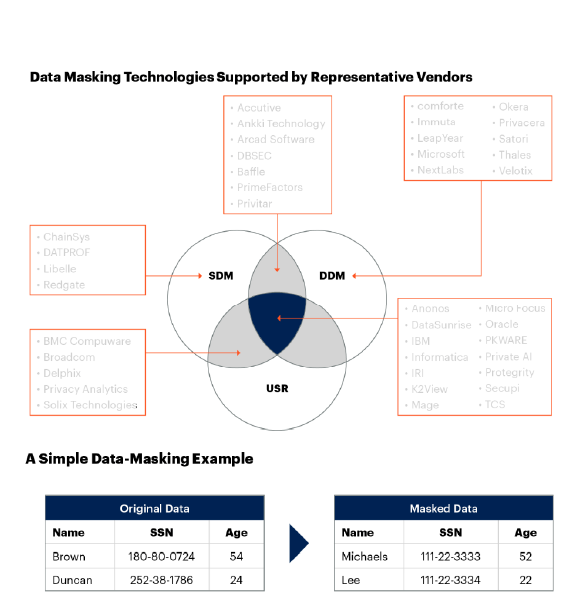

Free Gartner Report: Market Guide for Data Masking

Learn all about data masking from industry analyst Gartner:

-

Market description, including dynamic and static data masking techniques

-

Critical capabilities, such as PII discovery, rule management, operations, and reporting

-

Data masking vendors, broken down by category